Michael Berger has written another of his Nanowerk Spotlight articles, this one focussing on neuromorphic engineering and the concept of a brain-on-a-chip, bringing the topic up to date, April 2014 style.

It’s a topic he and I have been following (separately) for years. Berger’s April 4, 2014 Brain-on-a-chip Spotlight article provides a very welcome overview of the international neuromorphic engineering effort (Note: Links have been removed),

Constructing realistic simulations of the human brain is a key goal of the Human Brain Project, a massive European-led research project that commenced in 2013.

The Human Brain Project is a large-scale, scientific collaborative project, which aims to gather all existing knowledge about the human brain, build multi-scale models of the brain that integrate this knowledge and use these models to simulate the brain on supercomputers. The resulting “virtual brain” offers the prospect of a fundamentally new and improved understanding of the human brain, opening the way for better treatments for brain diseases and for novel, brain-like computing technologies.

Several years ago, another European project named FACETS (Fast Analog Computing with Emergent Transient States) completed an exhaustive study of neurons to find out exactly how they work, how they connect to each other and how the network can ‘learn’ to do new things. One of the outcomes of the project was PyNN, a simulator-independent language for building neuronal network models.

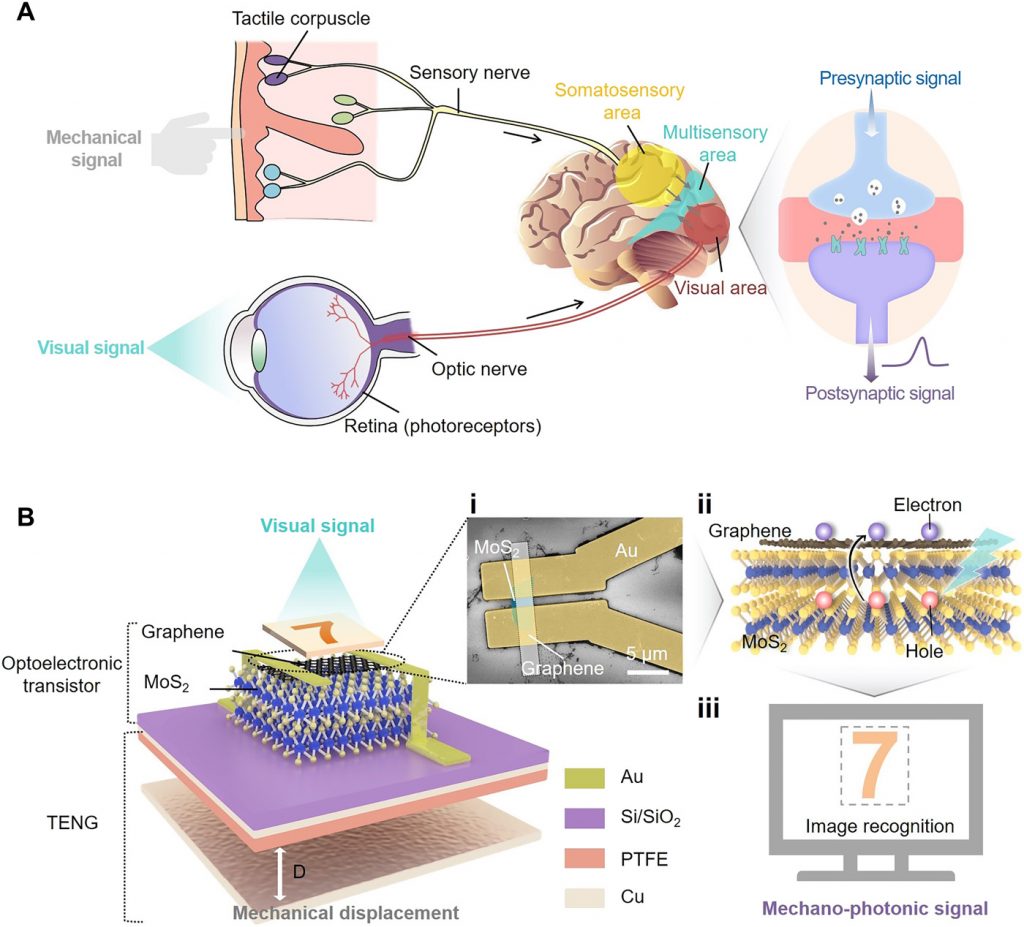

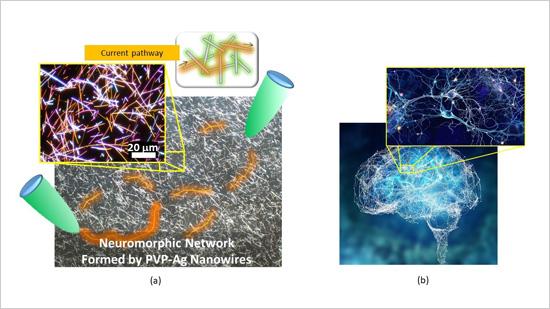

Scientists have great expectations that nanotechnologies will bring them closer to the goal of creating computer systems that can simulate and emulate the brain’s abilities for sensation, perception, action, interaction and cognition while rivaling its low power consumption and compact size – basically a brain-on-a-chip. Already, scientists are working hard on laying the foundations for what is called neuromorphic engineering – a new interdisciplinary discipline that includes nanotechnologies and whose goal is to design artificial neural systems with physical architectures similar to biological nervous systems.

Several research projects funded with millions of dollars are at work with the goal of developing brain-inspired computer architectures or virtual brains: DARPA’s SyNAPSE, the EU’s BrainScaleS (a successor to FACETS), or the Blue Brain project (one of the predecessors of the Human Brain Project) at Switzerland’s EPFL [École Polytechnique Fédérale de Lausanne].

Berger goes on to describe the raison d’être for neuromorphic engineering (attempts to mimic biological brains),

Programmable machines are limited not only by their computational capacity, but also by an architecture requiring (human-derived) algorithms to both describe and process information from their environment. In contrast, biological neural systems (e.g., brains) autonomously process information in complex environments by automatically learning relevant and probabilistically stable features and associations. Since real world systems are always many body problems with infinite combinatorial complexity, neuromorphic electronic machines would be preferable in a host of applications – but useful and practical implementations do not yet exist.

Researchers are mostly interested in emulating neural plasticity (aka synaptic plasticity). From Berger’s April 4, 2014 article,

Independent from military-inspired research like DARPA’s, nanotechnology researchers in France have developed a hybrid nanoparticle-organic transistor that can mimic the main functionalities of a synapse. This organic transistor, based on pentacene and gold nanoparticles and termed NOMFET (Nanoparticle Organic Memory Field-Effect Transistor), has opened the way to new generations of neuro-inspired computers, capable of responding in a manner similar to the nervous system (read more: “Scientists use nanotechnology to try building computers modeled after the brain”).

One of the key components of any neuromorphic effort, and its starting point, is the design of artificial synapses. Synapses dominate the architecture of the brain and are responsible for massive parallelism, structural plasticity, and robustness of the brain. They are also crucial to biological computations that underlie perception and learning. Therefore, a compact nanoelectronic device emulating the functions and plasticity of biological synapses will be the most important building block of brain-inspired computational systems.

…

In 2011, a team at Stanford University demonstrates a new single element nanoscale device, based on the successfully commercialized phase change material technology, emulating the functionality and the plasticity of biological synapses. In their work, the Stanford team demonstrated a single element electronic synapse with the capability of both the modulation of the time constant and the realization of the different synaptic plasticity forms while consuming picojoule level energy for its operation (read more: “Brain-inspired computing with nanoelectronic programmable synapses”).
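For anyone wondering what 'synaptic plasticity' looks like once it's written down, here is a minimal sketch of one textbook form, spike-timing-dependent plasticity (STDP). To be clear, this is not the Stanford team's device model; the function names and every parameter value (`a_plus`, `a_minus`, `tau_ms`) are my own illustrative assumptions.

```python
import math


def stdp_weight_change(dt_ms, a_plus=0.01, a_minus=0.012, tau_ms=20.0):
    """Weight change for one pre/post spike pair (textbook pair-based STDP).

    dt_ms is t_post - t_pre; a positive value means the presynaptic
    spike arrived first. All parameter values are illustrative assumptions.
    """
    if dt_ms > 0:
        # Pre before post: strengthen the synapse (potentiation).
        return a_plus * math.exp(-dt_ms / tau_ms)
    if dt_ms < 0:
        # Post before pre: weaken the synapse (depression).
        return -a_minus * math.exp(dt_ms / tau_ms)
    return 0.0


def apply_pairs(weight, spike_pairs, w_min=0.0, w_max=1.0):
    """Apply a list of (t_pre_ms, t_post_ms) pairs, keeping the weight bounded."""
    for t_pre, t_post in spike_pairs:
        weight += stdp_weight_change(t_post - t_pre)
        weight = min(w_max, max(w_min, weight))
    return weight
```

The closer together the two spikes are, the larger the change, and the sign depends on which neuron fired first. A hardware synapse of the kind Berger describes would have to produce this sort of timing-dependent change physically rather than in software.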

Berger does mention memristors but not in any great detail in this article,

Researchers have also suggested that memristor devices are capable of emulating the biological synapses with properly designed CMOS neuron components. A memristor is a two-terminal electronic device whose conductance can be precisely modulated by charge or flux through it. It has the special property that its resistance can be programmed (resistor) and subsequently remains stored (memory).

One research project already demonstrated that a memristor can connect conventional circuits and support a process that is the basis for memory and learning in biological systems (read more: “Nanotechnology’s road to artificial brains”).
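The 'programmed, then stored' idea is easier to see in a toy simulation. What follows is a minimal sketch assuming a simplified linear-drift memristor model; it is not taken from Berger's article or from any of the research projects he mentions, and the names and values (`r_on`, `r_off`, `k`, the time step) are illustrative assumptions only.

```python
def memristance(x, r_on=100.0, r_off=16000.0):
    """Resistance in ohms for internal state x in [0, 1] (1 = fully 'on')."""
    return r_on * x + r_off * (1.0 - x)


def apply_voltage(x, voltage, duration_s, dt=1e-4, k=1e4):
    """Advance the state x under a constant voltage using simple Euler steps.

    The state drifts in proportion to the current through the device,
    so the accumulated charge sets the resistance. k is an assumed
    drift coefficient in units of 1/(ampere * second).
    """
    steps = int(round(duration_s / dt))
    for _ in range(steps):
        current = voltage / memristance(x)
        x += k * current * dt
        x = min(1.0, max(0.0, x))  # the state cannot leave [0, 1]
    return x


x0 = 0.1
x1 = apply_voltage(x0, 1.0, 0.5)    # positive pulse: resistance drops
x2 = apply_voltage(x1, 0.0, 0.5)    # no voltage: state is retained
x3 = apply_voltage(x2, -1.0, 0.25)  # negative pulse: resistance rises again
```

With no voltage applied there is no current, so the state (and therefore the resistance) stays exactly where the last pulse left it. That retention is the 'memory' half of the name.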

You can find a number of memristor articles here including these: Memristors have always been with us from June 14, 2013; How to use a memristor to create an artificial brain from Feb. 26, 2013; Electrochemistry of memristors in a critique of the 2008 discovery from Sept. 6, 2012; and many more (type ‘memristor’ into the blog search box and you should receive many postings or alternatively, you can try ‘artificial brains’ if you want everything I have on artificial brains).

Getting back to Berger’s April 4, 2014 article, he mentions one more approach and this one stands out,

A completely different – and revolutionary – human brain model has been designed by researchers in Japan who introduced the concept of a new class of computer which does not use any circuit or logic gate. This artificial brain-building project differs from all others in the world. It does not use logic-gate based computing within the framework of Turing. The decision-making protocol is not a logical reduction of decision rather projection of frequency fractal operations in a real space, it is an engineering perspective of Gödel’s incompleteness theorem.

Berger wrote about this work in much more detail in a Feb. 10, 2014 Nanowerk Spotlight article titled: Brain jelly – design and construction of an organic, brain-like computer, (Note: Links have been removed),

In a previous Nanowerk Spotlight we reported on the concept of a full-fledged massively parallel organic computer at the nanoscale that uses extremely low power (“Will brain-like evolutionary circuit lead to intelligent computers?”). In this work, the researchers created a process of circuit evolution similar to the human brain in an organic molecular layer. This was the first time that such a brain-like ‘evolutionary’ circuit had been realized.

The research team, led by Dr. Anirban Bandyopadhyay, a senior researcher at the Advanced Nano Characterization Center at the National Institute of Materials Science (NIMS) in Tsukuba, Japan, has now finalized their human brain model and introduced the concept of a new class of computer which does not use any circuit or logic gate.

…

In a new open-access paper published online on January 27, 2014, in Information (“Design and Construction of a Brain-Like Computer: A New Class of Frequency-Fractal Computing Using Wireless Communication in a Supramolecular Organic, Inorganic System”), Bandyopadhyay and his team now describe the fundamental computing principle of a frequency fractal brain like computer.

“Our artificial brain-building project differs from all others in the world for several reasons,” Bandyopadhyay explains to Nanowerk. He lists the four major distinctions:

1) We do not use logic gate based computing within the framework of Turing, our decision-making protocol is not a logical reduction of decision rather projection of frequency fractal operations in a real space, it is an engineering perspective of Gödel’s incompleteness theorem.

2) We do not need to write any software, the argument and basic phase transition for decision-making, ‘if-then’ arguments and the transformation of one set of arguments into another self-assemble and expand spontaneously, the system holds an astronomically large number of ‘if’ arguments and its associative ‘then’ situations.

3) We use ‘spontaneous reply back’, via wireless communication using a unique resonance band coupling mode, not conventional antenna-receiver model, since fractal based non-radiative power management is used, the power expense is negligible.

4) We have carried out our own single DNA, single protein molecule and single brain microtubule neurophysiological study to develop our own Human brain model.

I encourage people to read Berger’s articles on this topic as they provide excellent information and links to much more. Curiously (mind you, it is easy to miss something), he does not mention James Gimzewski’s work at the University of California at Los Angeles (UCLA). Working with colleagues from the National Institute for Materials Science in Japan, Gimzewski published a paper about “two-, three-terminal WO3-x-based nanoionic devices capable of a broad range of neuromorphic and electrical functions”. You can find out more about the paper in my Dec. 24, 2012 posting titled: Synaptic electronics.

As for the ‘brain jelly’ paper, here’s a link to and a citation for it,

Design and Construction of a Brain-Like Computer: A New Class of Frequency-Fractal Computing Using Wireless Communication in a Supramolecular Organic, Inorganic System by Subrata Ghosh, Krishna Aswani, Surabhi Singh, Satyajit Sahu, Daisuke Fujita and Anirban Bandyopadhyay. Information 2014, 5(1), 28-100; doi:10.3390/info5010028

It’s an open access paper.

As for anyone who’s curious about why the US BRAIN initiative (Brain Research through Advancing Innovative Neurotechnologies, also referred to as the Brain Activity Map Project) is not mentioned, I believe that’s because it’s focussed on biological brains exclusively at this point (you can check its Wikipedia entry to confirm).

Anirban Bandyopadhyay was last mentioned here in a January 16, 2014 posting titled: Controversial theory of consciousness confirmed (maybe) in the context of a presentation in Amsterdam, Netherlands.