Researchers at Stanford University (California, US) believe they have a solution for a problem with neuroprosthetics (Note: I have included brief comments about neuroprosthetics and possible ethical issues at the end of this posting), according to an August 5, 2020 news item on ScienceDaily,

The current generation of neural implants record enormous amounts of neural activity, then transmit these brain signals through wires to a computer. But, so far, when researchers have tried to create wireless brain-computer interfaces to do this, it took so much power to transmit the data that the implants generated too much heat to be safe for the patient. A new study suggests how to solve this problem — and thus cut the wires.

…

An August 3, 2020 Stanford University news release (also on EurekAlert but published August 4, 2020) by Tom Abate, which originated the news item, details the problem and the proposed solution,

Stanford researchers have been working for years to advance a technology that could one day help people with paralysis regain use of their limbs, and enable amputees to use their thoughts to control prostheses and interact with computers.

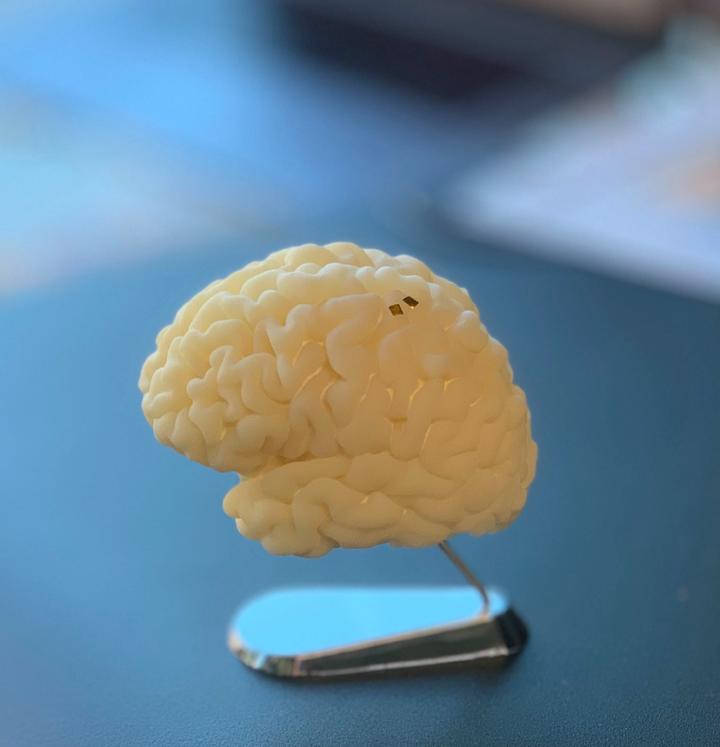

The team has been focusing on improving a brain-computer interface, a device implanted beneath the skull on the surface of a patient’s brain. This implant connects the human nervous system to an electronic device that might, for instance, help restore some motor control to a person with a spinal cord injury, or someone with a neurological condition like amyotrophic lateral sclerosis, also called Lou Gehrig’s disease.

The current generation of these devices record enormous amounts of neural activity, then transmit these brain signals through wires to a computer. But when researchers have tried to create wireless brain-computer interfaces to do this, it took so much power to transmit the data that the devices would generate too much heat to be safe for the patient.

Now, a team led by electrical engineers and neuroscientists Krishna Shenoy, PhD, and Boris Murmann, PhD, and neurosurgeon and neuroscientist Jaimie Henderson, MD, have shown how it would be possible to create a wireless device, capable of gathering and transmitting accurate neural signals, but using a tenth of the power required by current wire-enabled systems. These wireless devices would look more natural than the wired models and give patients freer range of motion.

Graduate student Nir Even-Chen and postdoctoral fellow Dante Muratore, PhD, describe the team’s approach in a Nature Biomedical Engineering paper.

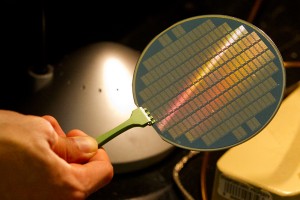

The team’s neuroscientists identified the specific neural signals needed to control a prosthetic device, such as a robotic arm or a computer cursor. The team’s electrical engineers then designed the circuitry that would enable a future, wireless brain-computer interface to process and transmit these carefully identified and isolated signals, using less power and thus making it safe to implant the device on the surface of the brain.

To test their idea, the researchers collected neuronal data from three nonhuman primates and one human participant in a (BrainGate) clinical trial.

As the subjects performed movement tasks, such as positioning a cursor on a computer screen, the researchers took measurements. The findings validated their hypothesis that a wireless interface could accurately control an individual’s motion by recording a subset of action-specific brain signals, rather than acting like the wired device and collecting brain signals in bulk.
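To get a feel for why sending a subset of signals matters, here is a back-of-the-envelope sketch of my own. Every number in it (channel count, sampling rates, bits per sample) is a hypothetical placeholder I chose for illustration and is not taken from the Stanford paper or the news release. It compares only raw data rates; it does not model power consumption, which depends on much more than the amount of data sent.

```python
def data_rate_bps(channels, samples_per_second, bits_per_sample):
    """Raw data rate in bits per second for a multi-channel recording."""
    return channels * samples_per_second * bits_per_sample


# Hypothetical "bulk" recording: every channel streamed at a high sampling rate.
# All three values are illustrative assumptions, not figures from the study.
broadband = data_rate_bps(channels=96, samples_per_second=30_000, bits_per_sample=16)

# Hypothetical reduced stream: the same channels, but only a coarse,
# low-rate summary of activity is sent per channel (again, assumed values).
reduced = data_rate_bps(channels=96, samples_per_second=100, bits_per_sample=4)

ratio = broadband / reduced

print(f"bulk stream:    {broadband:,} bits/s")
print(f"reduced stream: {reduced:,} bits/s")
print(f"reduction:      {ratio:,.0f}x less data to transmit")
```

With different assumed values the ratio changes, of course; the point is simply that the amount of data to be transmitted shrinks quickly once the implant sends only what the decoder needs.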

The next step will be to build an implant based on this new approach and proceed through a series of tests toward the ultimate goal.

Here’s a link to and a citation for the paper,

Power-saving design opportunities for wireless intracortical brain–computer interfaces by Nir Even-Chen, Dante G. Muratore, Sergey D. Stavisky, Leigh R. Hochberg, Jaimie M. Henderson, Boris Murmann & Krishna V. Shenoy. Nature Biomedical Engineering (2020) DOI: https://doi.org/10.1038/s41551-020-0595-9 Published: 03 August 2020

This paper is behind a paywall.

Comments about ethical issues

As I found out while investigating, ethical issues in this area abound. My first thought was to look at how someone with a focus on ability studies might view the complexities.

My ‘go to’ resource for human enhancement and ethical issues is Gregor Wolbring, an associate professor at the University of Calgary (Alberta, Canada). His profile lists these areas of interest: ability studies, disability studies, governance of emerging and existing sciences and technologies (e.g. neuromorphic engineering, genetics, synthetic biology, robotics, artificial intelligence, automatization, brain machine interfaces, sensors) and more.

I can’t find anything more recent on this particular topic but I did find an August 10, 2017 essay for The Conversation in which he comments on technology and the ethical issues of human enhancement, where the technology is gene-editing. Regardless, he makes points that are applicable to brain-computer interfaces (human enhancement) (Note: Links have been removed),

…

Ability expectations have been and still are used to disable, or disempower, many people, not only people seen as impaired. They’ve been used to disable or marginalize women (men making the argument that rationality is an important ability and women don’t have it). They also have been used to disable and disempower certain ethnic groups (one ethnic group argues they’re smarter than another ethnic group) and others.

…

A recent Pew Research survey on human enhancement revealed that an increase in the ability to be productive at work was seen as a positive. What does such ability expectation mean for the “us” in an era of scientific advancements in gene-editing, human enhancement and robotics?

Which abilities are seen as more important than others?

The ability expectations among “us” will determine how gene-editing and other scientific advances will be used.

And so how we govern ability expectations, and who influences that governance, will shape the future. Therefore, it’s essential that ability governance and ability literacy play a major role in shaping all advancements in science and technology.

One of the reasons I find Gregor’s commentary so valuable is that he writes lucidly about ability and disability as concepts and poses what can be provocative questions about expectations and what it is to be truly abled or disabled. You can find more of his writing here on his eponymous (more or less) blog.

Ethics of clinical trials for testing brain implants

This October 31, 2017 article by Emily Underwood for Science was revelatory,

In 2003, neurologist Helen Mayberg of Emory University in Atlanta began to test a bold, experimental treatment for people with severe depression, which involved implanting metal electrodes deep in the brain in a region called area 25 [emphases mine]. The initial data were promising; eventually, they convinced a device company, St. Jude Medical in Saint Paul, to sponsor a 200-person clinical trial dubbed BROADEN.

This month [October 2017], however, Lancet Psychiatry reported the first published data on the trial’s failure. The study stopped recruiting participants in 2012, after a 6-month study in 90 people failed to show statistically significant improvements between those receiving active stimulation and a control group, in which the device was implanted but switched off.

…

… a tricky dilemma for companies and research teams involved in deep brain stimulation (DBS) research: If trial participants want to keep their implants [emphases mine], who will take responsibility—and pay—for their ongoing care? And participants in last week’s meeting said it underscores the need for the growing corps of DBS researchers to think long-term about their planned studies.

…

… participants bear financial responsibility for maintaining the device should they choose to keep it, and for any additional surgeries that might be needed in the future, Mayberg says. “The big issue becomes cost [emphasis mine],” she says. “We transition from having grants and device donations” covering costs, to patients being responsible. And although the participants agreed to those conditions before enrolling in the trial, Mayberg says she considers it a “moral responsibility” to advocate for lower costs for her patients, even if it means “begging for charity payments” from hospitals. And she worries about what will happen to trial participants if she is no longer around to advocate for them. “What happens if I retire, or get hit by a bus?” she asks.

…

There’s another uncomfortable possibility: that the hypothesis was wrong [emphases mine] to begin with. A large body of evidence from many different labs supports the idea that area 25 is “key to successful antidepressant response,” Mayberg says. But “it may be too simple-minded” to think that zapping a single brain node and its connections can effectively treat a disease as complex as depression, Krakauer [John Krakauer, a neuroscientist at Johns Hopkins University in Baltimore, Maryland] says. Figuring that out will likely require more preclinical research in people—a daunting prospect that raises additional ethical dilemmas, Krakauer says. “The hardest thing about being a clinical researcher,” he says, “is knowing when to jump.”

…

Brain-computer interfaces, symbiosis, and ethical issues

This was the most recent and most directly applicable work that I could find. From a July 24, 2019 article by Liam Drew for Nature Outlook: The brain,

“It becomes part of you,” Patient 6 said, describing the technology that enabled her, after 45 years of severe epilepsy, to halt her disabling seizures. Electrodes had been implanted on the surface of her brain that would send a signal to a hand-held device when they detected signs of impending epileptic activity. On hearing a warning from the device, Patient 6 knew to take a dose of medication to halt the coming seizure.

“You grow gradually into it and get used to it, so it then becomes a part of every day,” she told Frederic Gilbert, an ethicist who studies brain–computer interfaces (BCIs) at the University of Tasmania in Hobart, Australia. “It became me,” she said. [emphasis mine]

Gilbert was interviewing six people who had participated in the first clinical trial of a predictive BCI to help understand how living with a computer that monitors brain activity directly affects individuals psychologically. Patient 6’s experience was extreme: Gilbert describes her relationship with her BCI as a “radical symbiosis”.

Symbiosis is a term, borrowed from ecology, that means an intimate co-existence of two species for mutual advantage. As technologists work towards directly connecting the human brain to computers, it is increasingly being used to describe humans’ potential relationship with artificial intelligence.

Interface technologies are divided into those that ‘read’ the brain to record brain activity and decode its meaning, and those that ‘write’ to the brain to manipulate activity in specific regions and affect their function.

Commercial research is opaque, but scientists at social-media platform Facebook are known to be pursuing brain-reading techniques for use in headsets that would convert users’ brain activity into text. And neurotechnology companies such as Kernel in Los Angeles, California, and Neuralink, founded by Elon Musk in San Francisco, California, predict bidirectional coupling in which computers respond to people’s brain activity and insert information into their neural circuitry. [emphasis mine]

…

Already, it is clear that melding digital technologies with human brains can have provocative effects, not least on people’s agency — their ability to act freely and according to their own choices. Although neuroethicists’ priority is to optimize medical practice, their observations also shape the debate about the development of commercial neurotechnologies.

…

Neuroethicists began to note the complex nature of the therapy’s side effects. “Some effects that might be described as personality changes are more problematic than others,” says Maslen [Hannah Maslen, a neuroethicist at the University of Oxford, UK]. A crucial question is whether the person who is undergoing stimulation can reflect on how they have changed. Gilbert, for instance, describes a DBS patient who started to gamble compulsively, blowing his family’s savings and seeming not to care. He could only understand how problematic his behaviour was when the stimulation was turned off.

Such cases present serious questions about how the technology might affect a person’s ability to give consent to be treated, or for treatment to continue. [emphases mine] If the person who is undergoing DBS is happy to continue, should a concerned family member or doctor be able to overrule them? If someone other than the patient can terminate treatment against the patient’s wishes, it implies that the technology degrades people’s ability to make decisions for themselves. It suggests that if a person thinks in a certain way only when an electrical current alters their brain activity, then those thoughts do not reflect an authentic self.

…

To observe a person with tetraplegia bringing a drink to their mouth using a BCI-controlled robotic arm is spectacular. [emphasis mine] This rapidly advancing technology works by implanting an array of electrodes either on or in a person’s motor cortex — a brain region involved in planning and executing movements. The activity of the brain is recorded while the individual engages in cognitive tasks, such as imagining that they are moving their hand, and these recordings are used to command the robotic limb.

If neuroscientists could unambiguously discern a person’s intentions from the chattering electrical activity that they record in the brain, and then see that it matched the robotic arm’s actions, ethical concerns would be minimized. But this is not the case. The neural correlates of psychological phenomena are inexact and poorly understood, which means that signals from the brain are increasingly being processed by artificial intelligence (AI) software before reaching prostheses. [emphasis mine]

…

But, he [Philipp Kellmeyer, a neurologist and neuroethicist at the University of Freiburg, Germany] says, using AI tools also introduces ethical issues of which regulators have little experience. [emphasis mine] Machine-learning software learns to analyse data by generating algorithms that cannot be predicted and that are difficult, or impossible, to comprehend. This introduces an unknown and perhaps unaccountable process between a person’s thoughts and the technology that is acting on their behalf.

…

Maslen is already helping to shape BCI-device regulation. She is in discussion with the European Commission about regulations it will implement in 2020 that cover non-invasive brain-modulating devices that are sold straight to consumers. [emphases mine; Note: There is a Canadian company selling this type of product, MUSE] Maslen became interested in the safety of these devices, which were covered by only cursory safety regulations. Although such devices are simple, they pass electrical currents through people’s scalps to modulate brain activity. Maslen found reports of them causing burns, headaches and visual disturbances. She also says clinical studies have shown that, although non-invasive electrical stimulation of the brain can enhance certain cognitive abilities, this can come at the cost of deficits in other aspects of cognition.

Regarding my note about MUSE, the company is InteraXon and its product is MUSE. They advertise the product as “Brain Sensing Headbands That Improve Your Meditation Practice.” The company website and the product seem to be one entity, Choose Muse. The company’s product has been used in some serious research papers; they can be found here. I did not see any research papers concerning safety issues.

Getting back to Drew’s July 24, 2019 article and Patient 6,

… He [Gilbert] is now preparing a follow-up report on Patient 6. The company that implanted the device in her brain to help free her from seizures went bankrupt. The device had to be removed.

… Patient 6 cried as she told Gilbert about losing the device. … “I lost myself,” she said.

“It was more than a device,” Gilbert says. “The company owned the existence of this new person.”

I strongly recommend reading Drew’s July 24, 2019 article in its entirety.

Finally

It’s easy to forget, in all the excitement over technologies ‘making our lives better’, that there can be a dark side or two. Some of the points brought forth in the articles by Wolbring, Underwood, and Drew confirmed my uneasiness as reasonable and gave me some specific examples of how these technologies raise new issues or old issues in new ways.

What I find interesting is that no one is using the term ‘cyborg’, which would seem quite applicable. There is an April 20, 2012 posting here titled ‘My mother is a cyborg‘ where I noted that by at least one definition people with joint replacements, pacemakers, etc. are considered cyborgs. In short, cyborgs or technology integrated into bodies have been amongst us for quite some time.

Interestingly, no one seems to care much when insects are turned into cyborgs (can’t remember who pointed this out) but it is a popular area of research, especially for military and search and rescue applications.

I’ve sometimes used the term ‘machine/flesh’ and/or ‘augmentation’ as a description of technologies integrated with bodies, human or otherwise. You can find lots on the topic here, however I’ve tagged or categorized it.

Amongst other pieces you can find here, there’s the August 8, 2016 posting, ‘Technology, athletics, and the ‘new’ human‘ featuring Oscar Pistorius when he was still best known as the ‘blade runner’ and a remarkably successful Paralympic athlete. It’s about his efforts to compete against able-bodied athletes at the London Olympic Games in 2012. It is fascinating to read about technology and elite athletes of any kind as they are often the first to try out ‘enhancements’.

Gregor Wolbring has a number of essays on The Conversation looking at Paralympic athletes and their pursuit of enhancements and how all of this is affecting our notions of abilities and disabilities. By extension, one has to assume that ‘abled’ athletes are also affected with the trickle-down effect on the rest of us.

Regardless of where we start the investigation, there is a sameness to the participants in neuroethics discussions with a few experts and commercial interests deciding on how the rest of us (however you define ‘us’ as per Gregor Wolbring’s essay) will live.

This paucity of perspectives is something I was getting at in my COVID-19 editorial for the Canadian Science Policy Centre. My thesis is that we need a range of ideas and insights that cannot be culled from small groups of people who’ve trained and read the same materials or entrepreneurs who too often seem to put profit over thoughtful implementations of new technologies. (See the PDF May 2020 edition [you’ll find me under Policy Development] or see my May 15, 2020 posting here [with all the sources listed].)

As for this new research at Stanford, it’s exciting news, which raises questions, as it offers the hope of independent movement for people diagnosed as tetraplegic (sometimes known as quadriplegic).