While my main interest is the group’s temporary art gallery, I am providing a brief explanatory introduction and a couple of previews for SIGGRAPH 2018.

Introduction

For anyone unfamiliar with the Special Interest Group on Computer GRAPHics and Interactive Techniques (SIGGRAPH) and its conferences, from the SIGGRAPH Wikipedia entry (Note: Links have been removed),

Some highlights of the conference are its Animation Theater and Electronic Theater presentations, where recently created CG films are played. There is a large exhibition floor, where several hundred companies set up elaborate booths and compete for attention and recruits. Most of the companies are in the engineering, graphics, motion picture, or video game industries. There are also many booths for schools which specialize in computer graphics or interactivity.

Dozens of research papers are presented each year, and SIGGRAPH is widely considered the most prestigious forum for the publication of computer graphics research.[1] The recent paper acceptance rate for SIGGRAPH has been less than 26%.[2] The submitted papers are peer-reviewed in a single-blind process.[3] There has been some criticism about the preference of SIGGRAPH paper reviewers for novel results rather than useful incremental progress.[4][5] …

This is the third SIGGRAPH Vancouver has hosted; the others were in 2011 and 2014. The theme for the 2018 iteration is ‘Generations’; here’s more about it from an Aug. 2, 2018 article by Terry Flores for Variety,

While its focus is firmly forward thinking, SIGGRAPH 2018, the computer graphics, animation, virtual reality, games, digital art, mixed reality, and emerging technologies conference, is also tipping its hat to the past thanks to its theme this year: Generations. The conference runs Aug. 12-16 in Vancouver, B.C.

“In the literal people sense, pioneers in the computer graphics industry are standing shoulder to shoulder with researchers, practitioners and the future of the industry — young people — mentoring them, dabbling across multiple disciplines to innovate, relate, and grow,” says SIGGRAPH 2018 conference chair Roy C. Anthony, VP of creative development and operations at software and technology firm Ventuz. “This is really what SIGGRAPH has always been about. Generations really seemed like a very appropriate way of looking back and remembering where we all came from and how far we’ve come.”

…

SIGGRAPH 2018 has a number of treats in store for attendees, including the debut of Disney’s first VR film, the short “Cycles”; production sessions on the making of “Blade Runner 2049,” “Game of Thrones,” “Incredibles 2” and “Avengers: Infinity War”; as well as sneak peeks of Disney’s upcoming “Ralph Breaks the Internet: Wreck-It Ralph 2” and Laika’s “Missing Link.”

That list of ‘treats’ in the last paragraph makes the conference seem more like an iteration of a ‘comic-con’ than a technology conference.

Previews

I have four items about work that will be presented at SIGGRAPH 2018. First up, something about ‘redirected walking’ from a June 18, 2018 Association for Computing Machinery news release on EurekAlert,

CHICAGO–In the burgeoning world of virtual reality (VR) technology, it remains a challenge to provide users with a realistic perception of infinite space and natural walking capabilities in the virtual environment. A team of computer scientists has introduced a new approach to address this problem by leveraging a natural human phenomenon: eye blinks.

All humans are functionally blind for about 10 percent of the time under normal circumstances due to eye blinks and saccades, a rapid movement of the eye between two points or objects. Eye blinks are a common and natural cause of so-called “change blindness,” which indicates the inability for humans to notice changes to visual scenes. Zeroing in on eye blinks and change blindness, the team has devised a novel computational system that effectively redirects the user in the virtual environment during these natural instances, all with undetectable camera movements to deliver orientation redirection.

“Previous RDW [redirected walking] techniques apply rotations continuously while the user is walking. But the amount of unnoticeable rotations is limited,” notes Eike Langbehn, lead author of the research and doctoral candidate at the University of Hamburg. “That’s why an orthogonal approach is needed–we add some additional rotations when the user is not focused on the visuals. When we learned that humans are functionally blind for some time due to blinks, we thought, ‘Why don’t we do the redirection during eye blinks?'”

Human eye blinks occur approximately 10 to 20 times per minute, about every 4 to 19 seconds. Leveraging this window of opportunity–where humans are unable to detect major motion changes while in a virtual environment–the researchers devised an approach to synchronize a computer graphics rendering system with this visual process, and introduce any useful motion changes in virtual scenes to enhance users’ overall VR experience.

The researchers’ experiments revealed that imperceptible camera rotations of 2 to 5 degrees and translations of 4 to 9 cm of the user’s viewpoint are possible during a blink without users even noticing. They tracked test participants’ eye blinks by an eye tracker in a VR head-mounted display. In a confirmatory study, the team validated that participants could not reliably detect in which of two eye blinks their viewpoint was manipulated while walking a VR curved path. The tests relied on unconscious natural eye blinking, but the researchers say redirection during blinking could be carried out consciously. Since users can consciously blink multiple times a day without much effort, eye blinks provide great potential to be used as an intentional trigger in their approach.
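As a rough illustration of the idea described above (not the researchers' actual system), the redirection can be sketched as a rule that only moves the virtual camera while an eye tracker reports a blink, and never by more than the unnoticed amounts quoted in the release. The names `Camera`, `clamp`, and `redirect_on_blink` are hypothetical, and using the upper bounds (5 degrees, 9 cm) as hard caps is an assumption made for this sketch.

```python
from dataclasses import dataclass

# Upper bounds quoted in the news release for changes that went unnoticed
# during a blink. Treating them as hard caps is an assumption of this sketch.
MAX_BLINK_ROTATION_DEG = 5.0
MAX_BLINK_TRANSLATION_CM = 9.0


@dataclass
class Camera:
    """Hypothetical, simplified virtual camera: one yaw angle, one axis."""
    yaw_deg: float = 0.0
    x_cm: float = 0.0


def clamp(value: float, limit: float) -> float:
    """Restrict value to the range [-limit, +limit]."""
    return max(-limit, min(limit, value))


def redirect_on_blink(camera: Camera, eyes_closed: bool,
                      wanted_rotation_deg: float, wanted_shift_cm: float):
    """Inject a capped offset only while a blink is reported.

    Returns the (rotation, shift) that was actually applied.
    """
    if not eyes_closed:
        # Eyes open: leave the camera alone so nothing visible changes.
        return 0.0, 0.0
    rotation = clamp(wanted_rotation_deg, MAX_BLINK_ROTATION_DEG)
    shift = clamp(wanted_shift_cm, MAX_BLINK_TRANSLATION_CM)
    camera.yaw_deg += rotation
    camera.x_cm += shift
    return rotation, shift
```

A steering algorithm that wants a 12 degree turn would get only 5 degrees of it during one blink and would have to wait for later blinks for the rest.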

The team will present their work at SIGGRAPH 2018, held 12-16 August in Vancouver, British Columbia. The annual conference and exhibition showcases the world’s leading professionals, academics, and creative minds at the forefront of computer graphics and interactive techniques.

“RDW is a big challenge since current techniques still need too much space to enable unlimited walking in VR,” notes Langbehn. “Our work might contribute to a reduction of space since we found out that unnoticeable rotations of up to five degrees are possible during blinks. This means we can improve the performance of RDW by approximately 50 percent.”

The team’s results could be used in combination with other VR research, such as novel steering algorithms, improved path prediction, and rotations during saccades, to name a few. Down the road, such techniques could some day enable consumer VR users to virtually walk beyond their living room.

Langbehn collaborated on the work with Frank Steinicke of University of Hamburg, Markus Lappe of University of Muenster, Gregory F. Welch of University of Central Florida, and Gerd Bruder, also of University of Central Florida. For the full paper and video, visit the team’s project page.

About ACM, ACM SIGGRAPH, and SIGGRAPH 2018

ACM, the Association for Computing Machinery, is the world’s largest educational and scientific computing society, uniting educators, researchers, and professionals to inspire dialogue, share resources, and address the field’s challenges. ACM SIGGRAPH is a special interest group within ACM that serves as an interdisciplinary community for members in research, technology, and applications in computer graphics and interactive techniques. SIGGRAPH is the world’s leading annual interdisciplinary educational experience showcasing the latest in computer graphics and interactive techniques. SIGGRAPH 2018, marking the 45th annual conference hosted by ACM SIGGRAPH, will take place from 12-16 August at the Vancouver Convention Centre in Vancouver, B.C.

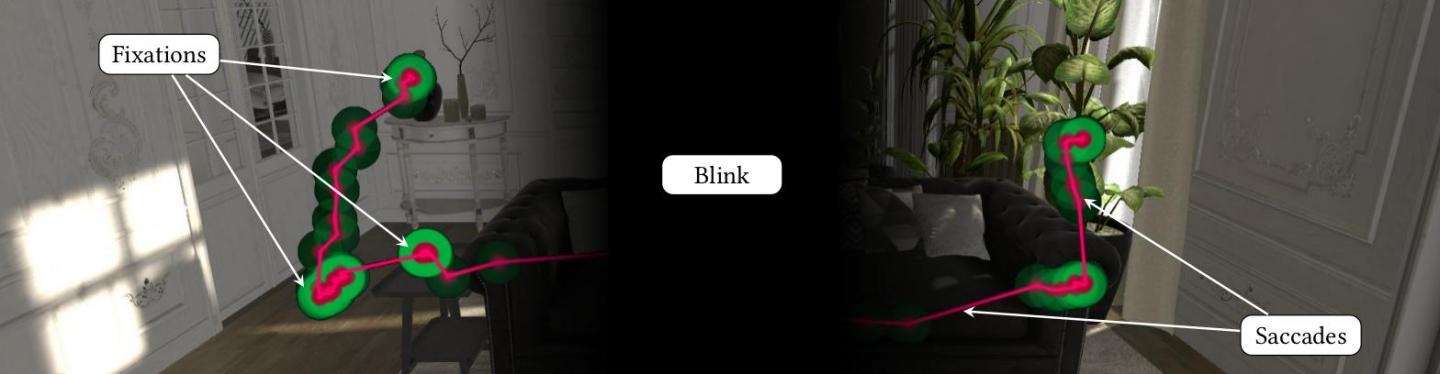

They have provided an image illustrating what they mean (I don’t find it especially informative),

Caption: The viewing behavior of a virtual reality user, including fixations (in green) and saccades (in red). A blink fully suppresses visual perception. Credit: Eike Langbehn

Next up (2), there’s Disney Corporation’s first virtual reality (VR) short, from a July 19, 2018 Association for Computing Machinery news release on EurekAlert,

Walt Disney Animation Studios will debut its first ever virtual reality short film at SIGGRAPH 2018, and the hope is viewers will walk away feeling connected to the characters as equally as they will with the VR technology involved in making the film.

Cycles, an experimental film directed by Jeff Gipson, centers around the true meaning of creating a home and the life it holds inside its walls. The idea for the film is personal, inspired by Gipson’s childhood spending time with his grandparents and creating memories in their home, and later, having to move them to an assisted living residence.

“Every house has a story unique to the people, the characters who live there,” says Gipson. “We wanted to create a story in this single place and be able to have the viewer witness life happening around them. It is an emotionally driven film, expressing the real ups and downs, the happy and sad moments in life.”

For Cycles, Gipson also drew from his past life as an architect, having spent several years designing skate parks, and from his passion for action sports, including freestyle BMX. In Los Angeles, where Gipson lives, it is not unusual to find homes with an empty swimming pool reserved for skating or freestyle biking. Part of the pitch for Cycles came out of Gipson’s experience riding in these empty pools and being curious about the homes attached to them, the families who lived there, and the memories they made.

SIGGRAPH attendees will have the opportunity to experience Cycles at the Immersive Pavilion, a new space for this year’s conference. The Pavilion is devoted exclusively to virtual, augmented, and mixed reality and will contain: the VR Theater, a storytelling extravaganza that is part of the Computer Animation Festival; the Vrcade, a space for VR, AR, and MR games or experiences; and the well-known Village, for showcasing large-scale projects. SIGGRAPH 2018, held 12-16 August in Vancouver, British Columbia, is an annual gathering that showcases the world’s leading professionals, academics, and creative minds at the forefront of computer graphics and interactive techniques.

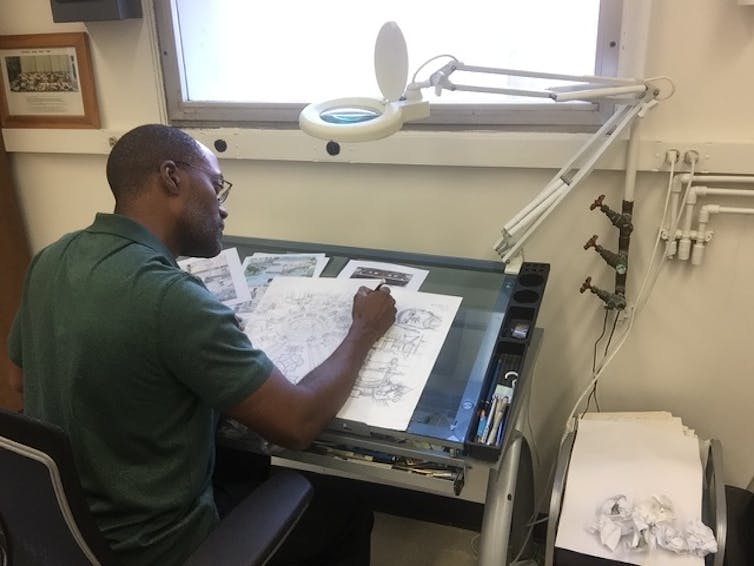

The production team completed Cycles in four months with about 50 collaborators as part of a professional development program at the studio. A key difference in VR filmmaking includes getting creative with how to translate a story to the VR “screen.” Pre-visualizing the narrative, for one, was a challenge. Rather than traditional storyboarding, Gipson and his team instead used a mix of Quill VR painting techniques and motion capture to “storyboard” Cycles, incorporating painters and artists to generate sculptures or 3D models of characters early on and draw scenes for the VR space. The creators also got innovative with the use of light and color saturation in scenes to help guide the user’s eyes during the film.

“What’s cool for VR is that we are really on the edge of trying to figure out what it is and how to tell stories in this new medium,” says Gipson. “In VR, you can look anywhere and really be transported to a different world, experience it from different angles, and see every detail. We want people watching to feel alive and feel emotion, and give them a true cinematic experience.”

This is Gipson’s VR directorial debut. He joined Walt Disney Animation Studios in 2013, serving as a lighting artist on Disney favorites like Frozen, Zootopia, and Moana. Of getting to direct the studio’s first VR short, he says, “VR is an amazing technology and a lot of times the technology is what is really celebrated. We hope more and more people begin to see the emotional weight of VR films, and with Cycles in particular, we hope they will feel the emotions we aimed to convey with our story.”

Apparently this is a still from the ‘short’,

Caption: Disney Animation Studios will present ‘Cycles’, its first virtual reality (VR) short, at ACM SIGGRAPH 2018. Credit: Disney Animation Studios

There’s also something (3) from Google as described in a July 26, 2018 Association for Computing Machinery news release on EurekAlert,

Google has unveiled a new virtual reality (VR) immersive experience based on a novel system that captures and renders high-quality, realistic images from the real world using light fields. Created by a team of leading researchers at Google, Welcome to Light Fields is the tech giant’s splash into the nascent arena of light fields VR experiences, an exciting corner of VR video technology gaining traction for its promise to deliver extremely high-quality imagery and experiences in the virtual world.

Google released Welcome to Light Fields earlier this year as a free app on Steam VR for HTC Vive, Oculus Rift, and Windows Mixed Reality headsets. The creators will demonstrate the VR experience at SIGGRAPH 2018, in the Immersive Pavilion, a new space for this year’s conference. The Pavilion is devoted exclusively to virtual, augmented, and mixed reality and will contain: the Vrcade, a space for VR, AR, and MR games or experiences; the VR Theater, a storytelling extravaganza that is part of the Computer Animation Festival; and the well-known Village, for showcasing large-scale projects. SIGGRAPH 2018, held 12-16 August in Vancouver, British Columbia, is an annual gathering that showcases the world’s leading professionals, academics, and creative minds at the forefront of computer graphics and interactive techniques.

Destinations in Welcome to Light Fields include NASA’s Space Shuttle Discovery, delivering to viewers an astronaut’s view inside the flight deck, which has never been open to the public; the pristine teak and mahogany interiors of the Gamble House, an architectural treasure in Pasadena, CA; and the glorious St. Stephen’s Church in Granada Hills, CA, home to a stunning wall of more than 14,000 pieces of glimmering stained glass.

“I love that light fields in VR can teleport you to exotic places in the real world, and truly make you believe you are there,” says Ryan Overbeck, software engineer at Google who co-led the project. “To me, this is magic.”

To bring this experience to life, Overbeck worked with a team that included Paul Debevec, senior staff engineer at Google, who managed the project and led the hardware piece with engineers Xueming Yu, Jay Busch, and Graham Fyffe. With Overbeck, Daniel Erickson and Daniel Evangelakos focused on the software end. The researchers designed a comprehensive system for capturing and rendering high-quality, spherical light field still images from footage captured in the real world. They developed two easy-to-use light field camera rigs, based on the GoPro Hero4 action sports camera, that efficiently capture thousands of images on the surface of a sphere. Those images were then passed through a cloud-based light-field-processing pipeline.

Among other things, explains Overbeck, “The processing pipeline uses computer vision to place the images in 3D and generate depth maps, and we use a modified version of our vp9 video codec to compress the light field data down to a manageable size.” To render a light field dataset, he notes, the team used a rendering algorithm that blends between the thousands of light field images in real-time.
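For readers wondering what 'blending between images' might look like, here is a toy sketch. It is not Google's rendering algorithm, which the release does not describe in detail; it assumes a simple inverse-distance weighting over the few capture positions nearest the viewer, and the function names `blend_weights` and `blend_pixel` are made up for illustration.

```python
import math


def blend_weights(viewpoint, camera_positions, k=3):
    """Return {camera_index: weight} for the k capture positions nearest
    the viewpoint. Weights are inverse-distance and sum to 1."""
    distances = [(math.dist(viewpoint, pos), i)
                 for i, pos in enumerate(camera_positions)]
    nearest = sorted(distances)[:k]
    # Standing exactly on a capture position: use that image alone.
    for d, i in nearest:
        if d == 0.0:
            return {i: 1.0}
    inverse = {i: 1.0 / d for d, i in nearest}
    total = sum(inverse.values())
    return {i: w / total for i, w in inverse.items()}


def blend_pixel(weights, pixel_per_camera):
    """Blend one RGB pixel from the selected cameras using the weights."""
    return tuple(
        sum(w * pixel_per_camera[i][channel] for i, w in weights.items())
        for channel in range(3)
    )
```

A viewer halfway between two capture positions would see an even mix of the two images, while a viewer standing exactly where a photo was taken would see that photo unchanged. A real system would also use the depth maps mentioned in the quote to reproject each image before blending, which this sketch leaves out.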

The team relied on Google’s talented pool of engineers in computer vision, graphics, video compression, and machine learning to overcome the unique challenges posed in light fields technology. They also collaborated closely with the WebM team (who make the vp9 video codec) to develop the high-quality light field compression format incorporated into their system, and leaned heavily on the expertise of the Jump VR team to help pose the images and generate depth maps. (Jump is Google’s professional VR system for achieving 3D-360 video production at scale.)

Indeed, with Welcome to Light Fields, the Google team is demonstrating the potential and promise of light field VR technology, showcasing the technology’s ability to provide a truly immersive experience with a level of unmatched realism. Though light fields technology has been researched and explored in computer graphics for more than 30 years, practical systems for actually delivering high-quality light field experiences have not yet been possible.

Part of the team’s motivation behind creating this VR light field experience is to invigorate the nascent field.

“Welcome to Light Fields proves that it is now possible to make a compelling light field VR viewer that runs on consumer-grade hardware, and we hope that this knowledge will encourage others to get involved with building light field technology and media,” says Overbeck. “We understand that in order to eventually make compelling consumer products based on light fields, we need a thriving light field ecosystem. We need open light field codecs, we need artists creating beautiful light field imagery, and we need people using VR in order to engage with light fields.”

I don’t really understand why this image, which looks like something that belongs on advertising material, would be chosen to accompany a news release on a science-based distribution outlet,

Caption: A team of leading researchers at Google will unveil the new immersive virtual reality (VR) experience “Welcome to Light Fields” at ACM SIGGRAPH 2018. Credit: Image courtesy of Google/Overbeck

Finally (4), ‘synthesizing realistic sounds’ is announced in an Aug. 6, 2018 Stanford University (US) news release (also on EurekAlert) by Taylor Kubota,

Advances in computer-generated imagery have brought vivid, realistic animations to life, but the sounds associated with what we see simulated on screen, such as two objects colliding, are often recordings. Now researchers at Stanford University have developed a system that automatically renders accurate sounds for a wide variety of animated phenomena.

“There’s been a Holy Grail in computing of being able to simulate reality for humans. We can animate scenes and render them visually with physics and computer graphics, but, as for sounds, they are usually made up,” said Doug James, professor of computer science at Stanford University. “Currently there exists no way to generate realistic synchronized sounds for complex animated content, such as splashing water or colliding objects, automatically. This fills that void.”

The researchers will present their work on this sound synthesis system as part of ACM SIGGRAPH 2018, the leading conference on computer graphics and interactive techniques. In addition to enlivening movies and virtual reality worlds, this system could also help engineering companies prototype how products would sound before being physically produced, and hopefully encourage designs that are quieter and less irritating, the researchers said.

“I’ve spent years trying to solve partial differential equations – which govern how sound propagates – by hand,” said Jui-Hsien Wang, a graduate student in James’ lab and in the Institute for Computational and Mathematical Engineering (ICME), and lead author of the paper. “This is actually a place where you don’t just solve the equation but you can actually hear it once you’ve done it. That’s really exciting to me and it’s fun.”

Predicting sound

Informed by geometry and physical motion, the system figures out the vibrations of each object and how, like a loudspeaker, those vibrations excite sound waves. It computes the pressure waves cast off by rapidly moving and vibrating surfaces but does not replicate room acoustics. So, although it does not recreate the echoes in a grand cathedral, it can resolve detailed sounds from scenarios like a crashing cymbal, an upside-down bowl spinning to a stop, a glass filling up with water or a virtual character talking into a megaphone.

Most sounds associated with animations rely on pre-recorded clips, which require vast manual effort to synchronize with the action on-screen. These clips are also restricted to noises that exist – they can’t predict anything new. Other systems that produce and predict sounds as accurate as those of James and his team work only in special cases, or assume the geometry doesn’t deform very much. They also require a long pre-computation phase for each separate object.

“Ours is essentially just a render button with minimal pre-processing that treats all objects together in one acoustic wave simulation,” said Ante Qu, a graduate student in James’ lab and co-author of the paper.

The simulated sound that results from this method is highly detailed. It takes into account the sound waves produced by each object in an animation but also predicts how those waves bend, bounce or deaden based on their interactions with other objects and sound waves in the scene.
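To make the 'vibrating surface excites pressure waves' idea a little more concrete, here is a deliberately tiny one-dimensional sketch. It is not the Stanford system, which handles full 3D animated scenes; it assumes a standard finite-difference update of the wave equation on a line of cells, with a sinusoidally vibrating 'surface' at the left edge and a listener partway along. The function name `simulate_pressure` and all parameter values are chosen purely for illustration.

```python
import math


def simulate_pressure(n_cells=100, n_steps=400, listener=50,
                      freq_hz=440.0, speed=343.0, dx=0.01, courant=0.5):
    """Toy 1D acoustic wave simulation.

    Returns the pressure heard at the listener cell for each time step.
    The left edge is driven like a vibrating surface; the right edge is
    held at zero pressure, so waves reflect off it.
    """
    dt = courant * dx / speed          # time step; courant <= 1 keeps it stable
    c2 = courant ** 2
    prev = [0.0] * n_cells             # pressure one step ago
    curr = [0.0] * n_cells             # pressure now
    signal = []
    for step in range(n_steps):
        nxt = [0.0] * n_cells
        # Discrete wave equation for the interior cells.
        for i in range(1, n_cells - 1):
            nxt[i] = (2.0 * curr[i] - prev[i]
                      + c2 * (curr[i + 1] - 2.0 * curr[i] + curr[i - 1]))
        # The vibrating surface: a sine wave imposed at the left edge.
        nxt[0] = math.sin(2.0 * math.pi * freq_hz * (step + 1) * dt)
        prev, curr = curr, nxt
        signal.append(curr[listener])
    return signal
```

The listener hears nothing until the wave has had time to travel from the surface, after which the returned list holds an oscillating pressure signal that could, in principle, be written out as audio samples.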

Challenges ahead

In its current form, the group’s process takes a while to create the finished product. But, now that they have proven this technique’s potential, they can focus on performance optimizations, such as implementing their method on parallel GPU hardware, that should make it drastically faster.

And, even in its current state, the results are worth the wait.

“The first water sounds we generated with the system were among the best ones we had simulated – and water is a huge challenge in computer-generated sound,” said James. “We thought we might get a little improvement, but it is dramatically better than previous approaches even right out of the box. It was really striking.”

Although the group’s work has faithfully rendered sounds of various objects spinning, falling and banging into each other, more complex objects and interactions – like the reverberating tones of a Stradivarius violin – remain difficult to model realistically. That, the group said, will have to wait for a future solution.

Timothy Langlois of Adobe Research is a co-author of this paper. This research was funded by the National Science Foundation and Adobe Research. James is also a professor, by courtesy, of music and a member of Stanford Bio-X.

Researchers Timothy Langlois, Doug L. James, Ante Qu and Jui-Hsien Wang have created a video featuring highlights of animations with sounds synthesized using the Stanford researchers’ new system,

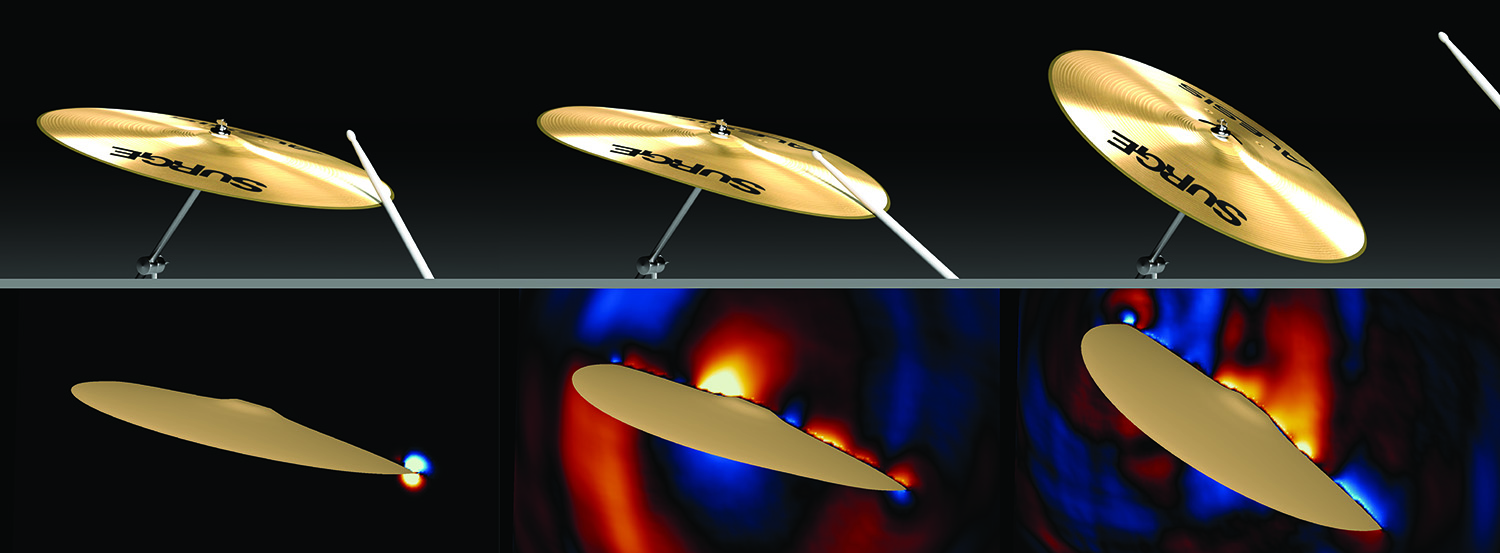

The researchers have also provided this image,

By computing pressure waves cast off by rapidly moving and vibrating surfaces – such as a cymbal – a new sound synthesis system developed by Stanford researchers can automatically render realistic sound for computer animations. (Image credit: Timothy Langlois, Doug L. James, Ante Qu and Jui-Hsien Wang)

It does seem like we’re synthesizing the world around us, eh?

The SIGGRAPH 2018 art gallery

Here’s what SIGGRAPH had to say about its 2018 art gallery in Vancouver and the themes for the conference and the gallery (from a May 18, 2018 Association for Computing Machinery news release on globalnewswire.com; also on this 2018 SIGGRAPH webpage),

SIGGRAPH 2018, the world’s leading showcase of digital art created using computer graphics and interactive techniques, will present a special Art Gallery, entitled “Origins,” and historic Art Papers in Vancouver, B.C. The 45th SIGGRAPH conference will take place 12–16 August at the Vancouver Convention Centre. The programs will also honor the generations of creators that have come before through a special, 50th anniversary edition of the Leonardo journal. To register for the conference, visit S2018.SIGGRAPH.ORG.

The SIGGRAPH 2018 ART GALLERY is a curated exhibition, conceived as a dialogical space that enables the viewer to reflect on man’s diverse cultural values and rituals through contemporary creative practices. Building upon an exciting and eclectic selection of creative practices mediated through technologies that represent the sophistication of our times, the SIGGRAPH 2018 Art Gallery will embrace the narratives of the indigenous communities based near Vancouver and throughout Canada as a source of inspiration. The exhibition will feature contemporary media artworks, art pieces by indigenous communities, and other traces of technologically mediated Ludic practices.

Andrés Burbano, SIGGRAPH 2018 Art Gallery chair and professor at Universidad de los Andes, said, “The Art Gallery aims to articulate myth and technology, science and art, the deep past and the computational present, and will coalesce around a theme of ‘Origins.’ Media and technological creative expressions will explore principles such as the origins of the cosmos, the origins of life, the origins of human presence, the origins of the occupation of territories in the Americas, and the origins of people living in the vast territories of the Arctic.”

He continued, “The venue [in Vancouver] hopes to rekindle the original spark that ignited the collaborative spirit of the SIGGRAPH community of engineers, scientists, and artists, who came together to create the very first conference in the early 1970s.”

Highlights from the 2018 Art Gallery include:

Transformation Mask (Canada) [Technology Based]

Shawn Hunt, independent; and Microsoft Garage: Andy Klein, Robert Butterworth, Jonathan Cobb, Jeremy Kersey, Stacey Mulcahy, Brendan O’Rourke, Brent Silk, and Julia Taylor-Hell, Microsoft Vancouver

TRANSFORMATION MASK is an interactive installation that features the Microsoft HoloLens. It utilizes electronics and mechanical engineering to express a physical and digital transformation. Participants are immersed in spatial sounds and holographic visuals.

Somnium (U.S.) [Science Based]

Marko Peljhan, Danny Bazo, and Karl Yerkes, University of California, Santa Barbara

Somnium is a cybernetic installation that provides visitors with the ability to sensorily, cognitively, and emotionally contemplate and experience exoplanetary discoveries, their macro and micro dimensions, and the potential for life in our Galaxy. Some might call it “space telescope.”

Ernest Edmonds Retrospective – Art Systems 1968-2018 (United Kingdom) [History Based]

Ernest Edmonds, De Montfort University

Celebrating one of the pioneers of computer graphics-based art since the early 1970s, this Ernest Edmonds career retrospective will showcase snapshots of Edmonds’ work as it developed over the years. With one piece from each decade, the retrospective will also demonstrate how vital the Leonardo journal has been throughout the 50-year journey.

In addition to the works above, the Art Gallery will feature pieces from notable female artists Ozge Samanci, Ruth West, and Nicole L’Huillier. For more information about the Edmonds retrospective, read THIS POST ON THE ACM SIGGRAPH BLOG.

The SIGGRAPH 2018 ART PAPERS program is designed to feature research from artists, scientists, theorists, technologists, historians, and more in one of four categories: project description, theory/criticism, methods, or history. The chosen work was selected by an international jury of scholars, artists, and immersive technology developers.

To celebrate the 50th anniversary of LEONARDO (MIT Press), and 10 years of its annual SIGGRAPH issue, SIGGRAPH 2018 is pleased to announce a special anniversary edition of the journal, which will feature the 2018 art papers. For 50 years, Leonardo has been the definitive publication for artist-academics. To learn more about the relationship between SIGGRAPH and the journal, listen to THIS EPISODE OF THE SIGGRAPH SPOTLIGHT PODCAST.

“In order to encourage a wider range of topics, we introduced a new submission type, short papers. This enabled us to accept more content than in previous years. Additionally, for the first time, we will introduce sessions that integrate the Art Gallery artist talks with Art Papers talks, promoting richer connections between these two creative communities,” said Angus Forbes, SIGGRAPH 2018 Art Papers chair and professor at University of California, Santa Cruz.

Art Papers highlights include:

Alienating the Familiar with CGI: A Recipe for Making a Full CGI Art House Animated Feature [Long]

Alex Counsell and Paul Charisse, University of Portsmouth

This paper explores the process of making and funding an art house feature film using full CGI in a marketplace where this has never been attempted. It explores cutting-edge technology and production approaches, as well as routes to successful fundraising.

Augmented Fauna and Glass Mutations: A Dialogue Between Material and Technique in Glassblowing and 3D Printing [Long]

Tobias Klein, City University of Hong Kong

The two presented artworks, “Augmented Fauna” and “Glass Mutations,” were created during an artist residence at the PILCHUCK GLASS SCHOOL. They are examples of the qualities and methods established through a synthesis between digital workflows and traditional craft processes and thus formulate the notion of digital craftsmanship.

Inhabitat: An Imaginary Ecosystem in a Children’s Science Museum [Short]

Graham Wakefield, York University, and Haru Hyunkyung Ji, OCAD University

“Inhabitat” is a mixed reality artwork in which participants become part of an imaginary ecology through three simultaneous perspectives of scale and agency; three distinct ways to see with other eyes. This imaginary world was exhibited at a children’s science museum for five months, using an interactive projection-augmented sculpture, a large screen and speaker array, and a virtual reality head-mounted display.

What’s the what?

My father used to say that and I always assumed it meant summarize the high points, if you need to, and get to the point, fast. In that spirit, I am both fascinated and mildly appalled. The virtual, mixed, and augmented reality technologies, as well as the others being featured at SIGGRAPH 2018, are wondrous in many ways but it seems we are coming ever closer to a world where we no longer interact with nature or other humans directly. (See my August 10, 2018 posting about the ‘extinction of experience’ for research that encourages more direct interaction with nature.) I realize that SIGGRAPH is intended as a primarily technical experience but I think a little more content questioning these technologies and their applications (social implications) might be in order. That’s often the artist’s role but I can’t see anything in the art gallery descriptions that hints at any sort of fundamental critique.