At least once a year I highlight some work about frogs. It’s usually about a new species, but this time it’s all about frog sounds (as well as sounds from other amphibians).

Caption: The calls of the midwife toad and other amphibians have served to test the sound classifier. Credit: Jaime Bosch (MNCN-CSIC)

In any event, here’s more from an April 30, 2018 Spanish Foundation for Science and Technology (FECYT) press release (also on EurekAlert but with a May 17, 2018 publication date),

The sounds of amphibians are altered by the increase in ambient temperature, a phenomenon that, in addition to interfering with reproductive behaviour, serves as an indicator of global warming. Researchers at the University of Seville have resorted to artificial intelligence to create an automatic classifier of the thousands of frog and toad sounds that can be recorded in a natural environment.

One of the consequences of climate change is its impact on the physiological functions of animals, such as frogs and toads with their calls. Their mating call, which plays a crucial role in the sexual selection and reproduction of these amphibians, is affected by the increase in ambient temperature.

When this exceeds a certain threshold, the physiological processes associated with sound production are restricted, and some calls are even inhibited altogether. In fact, the beginning, duration and intensity of calls from the male to the female are changed, which influences reproductive activity.

Taking into account this phenomenon, the analysis and classification of the sounds produced by certain species of amphibians and other animals have turned out to be a powerful indicator of temperature fluctuations and, therefore, of the existence and evolution of global warming.

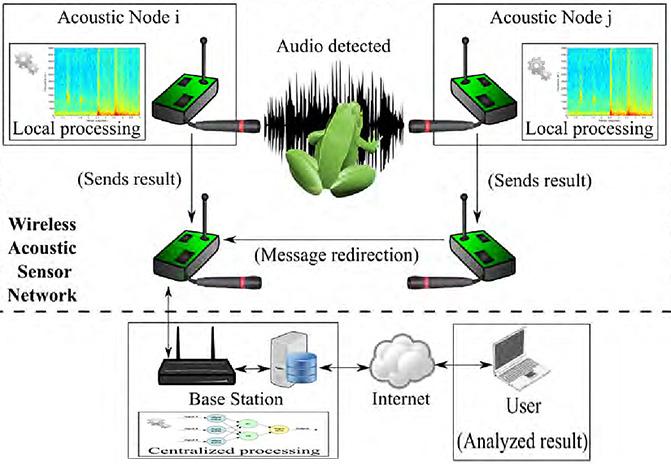

To capture the sounds of frogs, networks of audio sensors are placed and connected wirelessly in areas that can reach several hundred square kilometres. The problem is that a huge amount of bio-acoustic information is collected in environments as noisy as a jungle, and this makes it difficult to identify the species and their calls.

To solve this, engineers from the University of Seville have resorted to artificial intelligence. “We’ve segmented the sound into temporal windows or audio frames and have classified them by means of decision trees, a machine learning technique that is used in computing,” explains Amalia Luque Sendra, co-author of the work.
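To make the frame-then-classify idea concrete, here is a minimal sketch of my own and not the researchers' code. It makes several assumptions: synthetic sine tones stand in for field recordings, a simple zero-crossing rate stands in for the audio descriptors the team used, and a one-split decision tree (a "decision stump") stands in for a full decision tree. The labels `low_call` and `high_call`, the sample rate and the frame sizes are all hypothetical.

```python
import math
from collections import Counter

SAMPLE_RATE = 8000  # Hz, assumed for this toy example

def tone(freq_hz, n_samples, phase=0.3):
    """Synthetic sine tone standing in for a field recording."""
    return [math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE + phase)
            for i in range(n_samples)]

def split_into_frames(signal, frame_len=256, hop=128):
    """Cut a signal into fixed-length, half-overlapping frames."""
    return [signal[i:i + frame_len]
            for i in range(0, len(signal) - frame_len + 1, hop)]

def zero_crossing_rate(frame):
    """Fraction of adjacent sample pairs whose signs differ."""
    crossings = sum((a < 0) != (b < 0) for a, b in zip(frame, frame[1:]))
    return crossings / (len(frame) - 1)

def fit_stump(values, labels):
    """Fit a one-split decision tree on a single feature.

    Returns (threshold, label_at_or_below, label_above), chosen to give the
    fewest training errors among midpoints between sorted feature values.
    """
    ordered = sorted(set(values))
    best = None
    for lo, hi in zip(ordered, ordered[1:]):
        thr = (lo + hi) / 2
        below = [lab for v, lab in zip(values, labels) if v <= thr]
        above = [lab for v, lab in zip(values, labels) if v > thr]
        below_label = Counter(below).most_common(1)[0][0]
        above_label = Counter(above).most_common(1)[0][0]
        errors = (sum(lab != below_label for lab in below)
                  + sum(lab != above_label for lab in above))
        if best is None or errors < best[0]:
            best = (errors, thr, below_label, above_label)
    if best is None:
        raise ValueError("need at least two distinct feature values")
    return best[1:]

def classify_frame(frame, stump):
    """Label one frame using the fitted threshold."""
    thr, below_label, above_label = stump
    return below_label if zero_crossing_rate(frame) <= thr else above_label

# Training data: frames from two synthetic "call types" (hypothetical labels).
train_values, train_labels = [], []
for freq, label in [(500, "low_call"), (2000, "high_call")]:
    for frame in split_into_frames(tone(freq, 2048)):
        train_values.append(zero_crossing_rate(frame))
        train_labels.append(label)

stump = fit_stump(train_values, train_labels)

# Each frame of an unseen tone is classified on its own, with no memory of
# the frames before or after it.
predictions = [classify_frame(f, stump)
               for f in split_into_frames(tone(600, 2048))]
print(Counter(predictions))
```

A real decision tree repeats this kind of threshold split recursively over many features, but the per-frame workflow is the same: cut the recording into windows, describe each window with numbers, and let the tree assign a label.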

The researchers based the classification on MPEG-7 parameters and audio descriptors, a standard way of representing audiovisual information. The details are published in the journal Expert Systems with Applications.
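For readers wondering what an "audio descriptor" looks like in practice, here is a small sketch of one: a spectral centroid, the power-weighted average frequency of a frame. This is my own simplified illustration. It is not the MPEG-7 standard's exact definition of its spectrum centroid descriptor, and I am not claiming it is among the parameters the researchers used. The sample rate, frame length and test tone are assumptions.

```python
import cmath
import math

def power_spectrum(frame):
    """Naive DFT power spectrum for bins 0..N/2 (fine for short frames)."""
    n = len(frame)
    spectrum = []
    for k in range(n // 2 + 1):
        coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(frame))
        spectrum.append(abs(coeff) ** 2)
    return spectrum

def spectral_centroid_hz(frame, sample_rate):
    """Power-weighted mean frequency of one frame, in Hz."""
    power = power_spectrum(frame)
    total = sum(power)
    if total == 0:
        return 0.0  # silent frame: no meaningful centroid
    bin_hz = sample_rate / len(frame)
    return sum(k * bin_hz * p for k, p in enumerate(power)) / total

SAMPLE_RATE = 8000  # Hz, assumed
# A pure 1000 Hz tone; its centroid should sit at about 1000 Hz.
frame = [math.sin(2 * math.pi * 1000 * i / SAMPLE_RATE) for i in range(256)]
centroid = spectral_centroid_hz(frame, SAMPLE_RATE)
print(f"centroid: {centroid:.1f} Hz")
```

One number like this per frame, alongside a handful of others, is the sort of compact description a classifier can work with instead of raw audio samples.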

This technique has been put to the test with real sounds of amphibians recorded in the middle of nature and provided by the National Museum of Natural Sciences. More specifically, there are 868 records, with 369 mating calls sung by the male and 63 release calls issued by the female natterjack toad (Epidalea calamita), along with 419 mating calls and 17 distress calls of the common midwife toad (Alytes obstetricans).
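As a quick check on those figures, the four call counts do add up to the 868 records mentioned. The snippet below (my own arithmetic, using only the numbers quoted above) also shows how unevenly the records are spread across the four call types.

```python
# Call counts as reported in the press release.
counts = {
    ("Epidalea calamita", "mating"): 369,
    ("Epidalea calamita", "release"): 63,
    ("Alytes obstetricans", "mating"): 419,
    ("Alytes obstetricans", "distress"): 17,
}

total = sum(counts.values())
shares = {key: n / total for key, n in counts.items()}

for (species, call), share in shares.items():
    print(f"{species:20s} {call:9s} {share:6.1%}")
print("total records:", total)
```

The distress calls make up only about 2% of the records, so an overall success rate on its own may not say much about how well the rarest call type is recognised.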

“In this case we obtained a success rate close to 90% when classifying the sounds,” observes Luque Sendra, who recalls that, in addition to the types of calls, the number of individuals of certain amphibian species that are heard in a geographical region over time can also be used as an indicator of climate change.

“A temperature increase affects the calling patterns,” she says, “but since these in most cases have a sexual calling nature, they also affect the number of individuals. With our method, we still can’t directly determine the exact number of specimens in an area, but it is possible to get a first approximation.”

In addition to the image of the midwife toad, the researchers included this image to illustrate their work,

Caption: This is the architecture of a wireless sensor network. Credit: J. Luque et al./Sensors

Here’s a link to and a citation for the paper,

Non-sequential automatic classification of anuran sounds for the estimation of climate-change indicators by Amalia Luque, Javier Romero-Lemos, Alejandro Carrasco, Julio Barbancho. Expert Systems with Applications Volume 95, 1 April 2018, Pages 248-260 DOI: https://doi.org/10.1016/j.eswa.2017.11.016 Available online 10 November 2017

This paper is open access.