A living, breathing supercomputer is a bit mind-boggling, but scientists at McGill University (Canada) and their international colleagues have created a working model, according to a Feb. 26, 2016 McGill University news release on EurekAlert (also received via email). Note: A link has been removed,

The substance that provides energy to all the cells in our bodies, adenosine triphosphate (ATP), may also be able to power the next generation of supercomputers. That is what an international team of researchers led by Prof. Nicolau, the Chair of the Department of Bioengineering at McGill, believes. They published an article on the subject earlier this week in the Proceedings of the National Academy of Sciences (PNAS), in which they describe a model of a biological computer they have created that is able to process information very quickly and accurately using parallel networks, in the same way that massive electronic supercomputers do.

Except that the model bio-supercomputer they have created is a whole lot smaller than current supercomputers, uses much less energy, and uses proteins present in all living cells to function.

Doodling on the back of an envelope

“We’ve managed to create a very complex network in a very small area,” says Dan Nicolau, Sr., with a laugh. He began working on the idea with his son, Dan Jr., more than a decade ago and was then joined by colleagues from Germany, Sweden and the Netherlands some seven years ago [there is also one collaborator from the US according to the journal’s [PNAS] list of author affiliations; read on for the link to the paper]. “This started as a back-of-an-envelope idea, after too much rum I think, with drawings of what looked like small worms exploring mazes.”

The model bio-supercomputer that the Nicolaus (father and son) and their colleagues have created came about thanks to a combination of geometrical modelling and engineering know-how (at the nanoscale). It is a first step in showing that this kind of biological supercomputer can actually work.

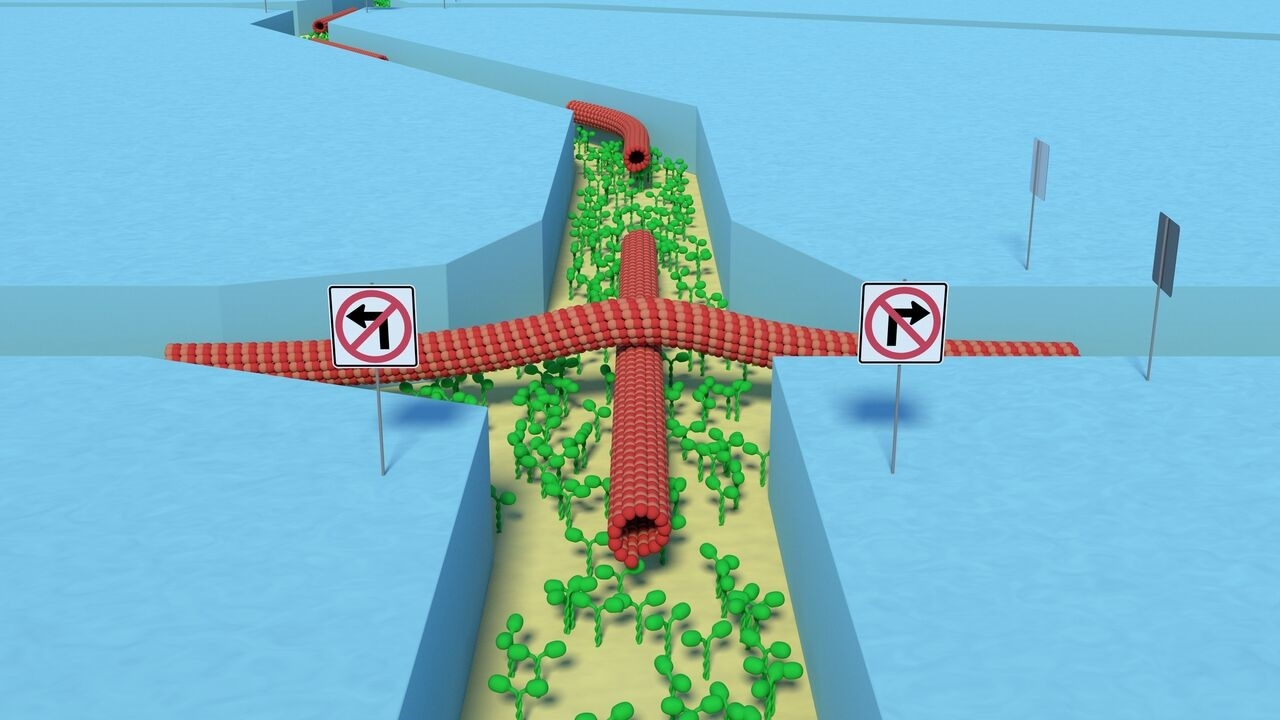

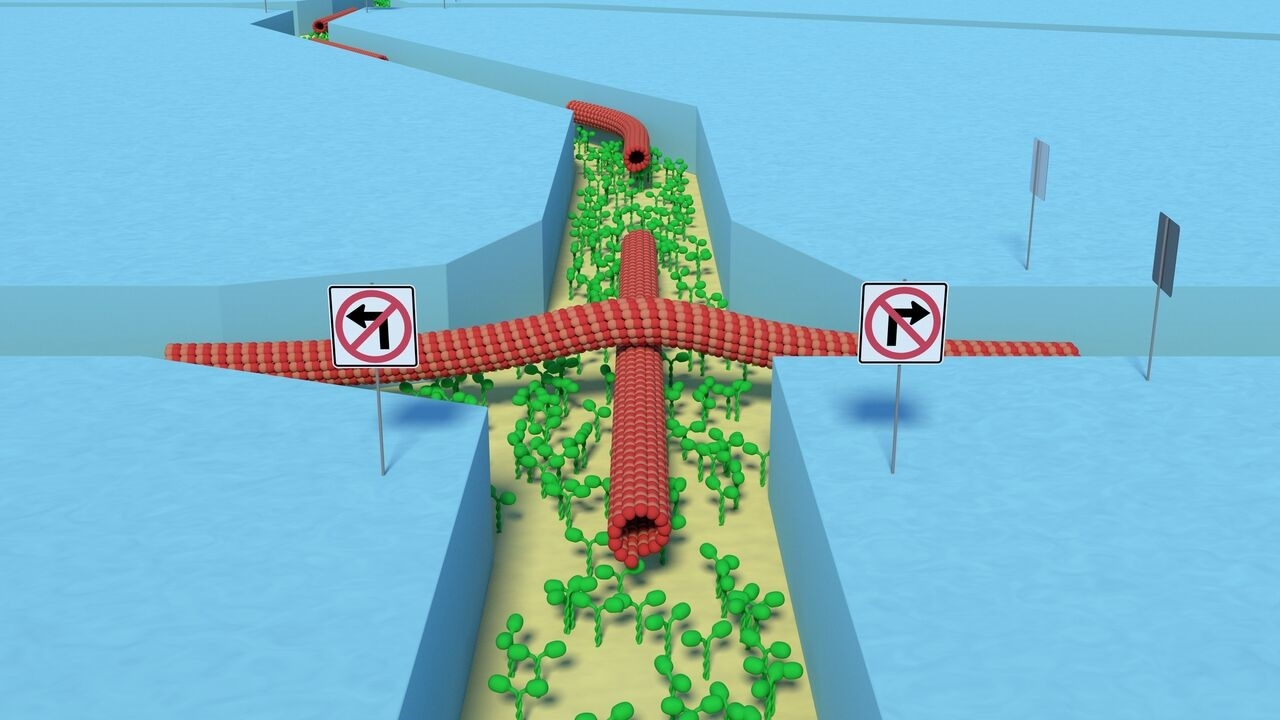

The circuit the researchers have created looks a bit like a road map of a busy and very organized city as seen from a plane. Just as in a city, cars and trucks of different sizes, powered by motors of different kinds, navigate through channels that have been created for them, consuming the fuel they need to keep moving.

More sustainable computing

But in the case of the biocomputer, the city is a chip measuring about 1.5 cm square in which channels have been etched. Instead of the electrons that are propelled by an electrical charge and move around within a traditional microchip, short strings of proteins (which the researchers call biological agents) travel around the circuit in a controlled way, their movements powered by ATP, the chemical that is, in some ways, the juice of life for everything from plants to politicians.

Because it is run by biological agents, and as a result hardly heats up at all, the model bio-supercomputer that the researchers have developed uses far less energy than standard electronic supercomputers do, making it more sustainable. Traditional supercomputers use so much electricity that they heat up a lot and then need to be cooled down, often requiring their own power plant to function.

Moving from model to reality

Although the model bio-supercomputer was able to tackle a complex classical mathematical problem very efficiently by using parallel computing of the kind used by supercomputers, the researchers recognize that there is still a lot of work ahead to move from the model they have created to a full-scale functional computer.

“Now that this model exists as a way of successfully dealing with a single problem, there are going to be many others who will follow up and try to push it further, using different biological agents, for example,” says Nicolau. “It’s hard to say how soon it will be before we see a full-scale bio-supercomputer. One option for dealing with larger and more complex problems may be to combine our device with a conventional computer to form a hybrid device. Right now we’re working on a variety of ways to push the research further.”

What was once the stuff of science fiction is now just science.

The funding for this project is interesting,

This research was funded by: The European Union Seventh Framework Programme; [US] Defense Advanced Research Projects Agency [DARPA]; NanoLund; The Miller Foundation; The Swedish Research Council; The Carl Trygger Foundation; the German Research Foundation; and by Linnaeus University.

I don’t see a single Canadian funding agency listed.

In any event, here’s a link to and a citation for the paper,

Parallel computation with molecular-motor-propelled agents in nanofabricated networks by Dan V. Nicolau, Jr., Mercy Lard, Till Korten, Falco C. M. J. M. van Delft, Malin Persson, Elina Bengtsson, Alf Månsson, Stefan Diez, Heiner Linke, and Dan V. Nicolau. Proceedings of the National Academy of Sciences (PNAS): http://www.pnas.org/content/early/2016/02/17/1510825113

This paper appears to be open access.

Finally, the researchers have provided an image illustrating their work,

Caption: Strands of proteins of different lengths move around the chip in the bio computer in directed patterns, a bit like cars and trucks navigating the streets of a city. Credit: Till Korten

ETA Feb. 29, 2016: Technical University Dresden’s Feb. 26, 2016 press release on EurekAlert also announces the bio-computer, albeit from a rather different perspective,

The pioneering achievement was developed by researchers from the Technische Universität Dresden and the Max Planck Institute of Molecular Cell Biology and Genetics, Dresden in collaboration with international partners from Canada, England, Sweden, the US, and the Netherlands.

Conventional electronic computers have led to remarkable technological advances in the past decades, but their sequential nature (they process only one computational task at a time) prevents them from solving problems of a combinatorial nature, such as protein design and folding, and optimal network routing. This is because the number of calculations required to solve such problems grows exponentially with the size of the problem, rendering them intractable with sequential computing. Parallel computing approaches can in principle tackle such problems, but the approaches developed so far have suffered from drawbacks that have made scaling up and practical implementation very difficult. The recently reported parallel-computing approach aims to address these issues by combining well-established nanofabrication technology with molecular motors, which are highly energy efficient and inherently work in parallel.
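
(This sketch isn't part of the press release.) To make the "grows exponentially" point concrete, here's a minimal Python illustration of what a sequential machine faces. The release doesn't name its benchmark, so I'm assuming the subset sum problem (does some subset of a set of integers add up to a target?) as the example; the function name and inputs are hypothetical.

```python
from itertools import combinations


def subset_sums_sequential(values):
    """Check every subset of `values`, one after another, and record its sum.

    The inner loop body runs once per subset, i.e. 2 ** len(values) times,
    which is the exponential growth a sequential computer faces.
    """
    sums = set()
    checked = 0
    for size in range(len(values) + 1):
        for subset in combinations(values, size):
            checked += 1
            sums.add(sum(subset))
    return sums, checked


if __name__ == "__main__":
    # Hypothetical inputs: the first N positive integers.
    for n in (4, 8, 12, 16):
        _, checked = subset_sums_sequential(list(range(1, n + 1)))
        print(f"N = {n:2d}: {checked} subsets checked")
```

Each extra element doubles the work: 16, 256, 4,096 and 65,536 subsets for N = 4, 8, 12 and 16.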

In this approach, which the researchers demonstrate on a benchmark combinatorial problem that is notoriously hard to solve with sequential computers, the problem to be solved is ‘encoded’ into a network of nanoscale channels (Fig. 1a). This is done, on the one hand, by mathematically designing a geometrical network capable of representing the problem and, on the other hand, by fabricating a physical network based on this design using lithography, a standard chip-manufacturing technique.
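
(Again, not from the release.) One way to picture "encoding a problem into a network" is the following Python sketch, which assumes a subset sum instance and a simplified layered geometry of my own devising, not the paper's exact design: each layer of junctions corresponds to one number, and a filament either keeps its horizontal position (number skipped) or shifts sideways by that number (number added). The exits at the bottom then sit at every reachable sum. `build_network` is a hypothetical name.

```python
from collections import defaultdict


def build_network(values):
    """Return (edges, exits) for a simplified, hypothetical subset sum network.

    Nodes are (layer, x) pairs. Layer k holds the junctions that decide
    whether values[k] is included: from horizontal position x a filament
    can continue to (k + 1, x) (value skipped) or to (k + 1, x + values[k])
    (value added). Paths that reach the same position merge, so the width
    of each layer never exceeds sum(values) + 1.
    """
    edges = defaultdict(set)
    frontier = {0}  # all filaments enter the network at x = 0
    for layer, value in enumerate(values):
        next_frontier = set()
        for x in frontier:
            for next_x in (x, x + value):
                edges[(layer, x)].add((layer + 1, next_x))
                next_frontier.add(next_x)
        frontier = next_frontier
    # The exits at the bottom of the network are the reachable sums.
    return dict(edges), sorted(frontier)


if __name__ == "__main__":
    edges, exits = build_network([2, 5, 9])
    print(f"{len(edges)} junctions, exits at x = {exits}")
```

Because paths that land on the same position merge, the layout stays bounded by the total of the numbers rather than by the number of subsets, which is the sense in which a fixed, lithographed chip can hold the whole problem.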

The network is then explored in parallel by many protein filaments (here actin filaments or microtubules) that are self-propelled by a molecular layer of motor proteins (here myosin or kinesin) covering the bottom of the channels (Fig. 3a). The design of the network automatically guides the filaments to the correct solutions to the problem (Fig. 1b). This is achieved with two types of junctions that cause the filaments to behave in two different ways. Because the filaments are fairly rigid structures, they can turn left or right only where the crossing channels meet at certain angles. By defining these options (‘split junctions’, Fig. 2a + 3b, and ‘pass junctions’, Fig. 2b + 3c), the scientists achieved an ‘intelligent’ network: at a pass junction a filament can only continue straight across, while at a split junction it takes one of two possible channels with a 50/50 probability.
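
(Not from the release.) The split/pass behaviour lends itself to a quick Monte Carlo simulation. This Python sketch again assumes a subset sum instance and my own simplified geometry: every filament makes an independent 50/50 choice at each split junction, and pass junctions are assumed to leave its position unchanged, so they aren't modelled. `run_filaments` and its parameters are hypothetical.

```python
import random
from collections import Counter


def run_filaments(values, n_filaments, seed=0):
    """Monte Carlo sketch of filaments exploring a subset sum network.

    At each split junction a filament makes an independent 50/50 choice:
    turn (add the current value to its horizontal position) or go straight
    (skip it). Pass junctions are assumed to let filaments cross straight
    through without changing position, so they are not modelled here.
    Returns a Counter mapping exit position -> number of filaments.
    """
    rng = random.Random(seed)
    exits = Counter()
    for _ in range(n_filaments):
        x = 0
        for value in values:
            if rng.random() < 0.5:  # split junction: 50/50 choice
                x += value
        exits[x] += 1
    return exits


if __name__ == "__main__":
    exits = run_filaments([2, 5, 9], n_filaments=10_000)
    for position, count in sorted(exits.items()):
        print(f"exit {position:2d}: {count} filaments")
    # A target sum has a solution only if some filament reaches that exit.
    print("11 reachable:", 11 in exits, "| 12 reachable:", 12 in exits)
```

Each of the eight occupied exits collects roughly an eighth of the filaments, while an exit such as 12, which no combination of 2, 5 and 9 can reach, stays empty. In this toy model, reading off which exits are occupied answers the question for every target at once.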

The time to solve combinatorial problems of size $N$ using this parallel-computing approach scales approximately as $N^2$, which is a dramatic improvement over the exponential ($2^N$) time scales required by conventional, sequential computers. Importantly, the approach is fully scalable with existing technologies and uses orders of magnitude less energy than conventional computers, thus circumventing the heating issues that are currently limiting the performance of conventional computing.
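
(Not from the release.) To see how far apart those two growth rates drift, here's a small Python tabulation. It compares bare step counts only and ignores constant factors; `compare_scaling` is a hypothetical helper.

```python
def compare_scaling(sizes):
    """Tabulate the two growth rates quoted in the press release:
    roughly N**2 steps for the parallel network versus 2**N for sequential
    enumeration. Constant factors are ignored, so only the trend matters."""
    return [(n, n ** 2, 2 ** n) for n in sizes]


if __name__ == "__main__":
    for n, parallel, sequential in compare_scaling([5, 10, 20, 30]):
        print(f"N = {n:2d}   N^2 = {parallel:4d}   2^N = {sequential:,}")
```

At N = 5 the two are close (25 versus 32), but by N = 30 it's 900 versus 1,073,741,824.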

The diagrams mentioned were not included with the press release.