I have one research announcement from China and another from the Netherlands, both of which concern memristors and oxides.

China

A May 17, 2021 news item on Nanowerk announces work suggesting that memristors may not need to rely solely on oxides but could also make use of light,

Scientists are getting better at making neuron-like junctions for computers that mimic the human brain’s random information processing, storage and recall. Fei Zhuge of the Chinese Academy of Sciences and colleagues reviewed the latest developments in the design of these ‘memristors’ for the journal Science and Technology of Advanced Materials …

Computers apply artificial intelligence programs to recall previously learned information and make predictions. These programs are extremely energy- and time-intensive: typically, vast volumes of data must be transferred between separate memory and processing units. To solve this issue, researchers have been developing computer hardware that allows for more random and simultaneous information transfer and storage, much like the human brain.

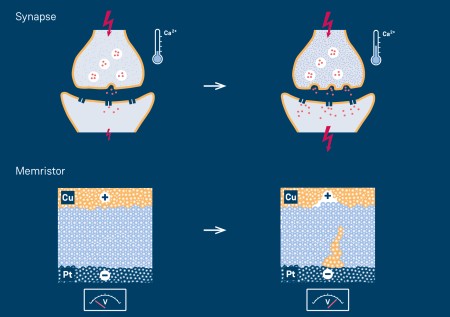

Electronic circuits in these ‘neuromorphic’ computers include memristors that resemble the junctions between neurons called synapses. Energy flows through a material from one electrode to another, much like a neuron firing a signal across the synapse to the next neuron. Scientists are now finding ways to better tune this intermediate material so the information flow is more stable and reliable.

…

I was unable to locate the news release that originated the news item, but this May 17, 2021 news item on eedesignit.com provides the remaining portion of it.

“Oxides are the most widely used materials in memristors,” said Zhuge. “But oxide memristors have unsatisfactory stability and reliability. Oxide-based hybrid structures can effectively improve this.”

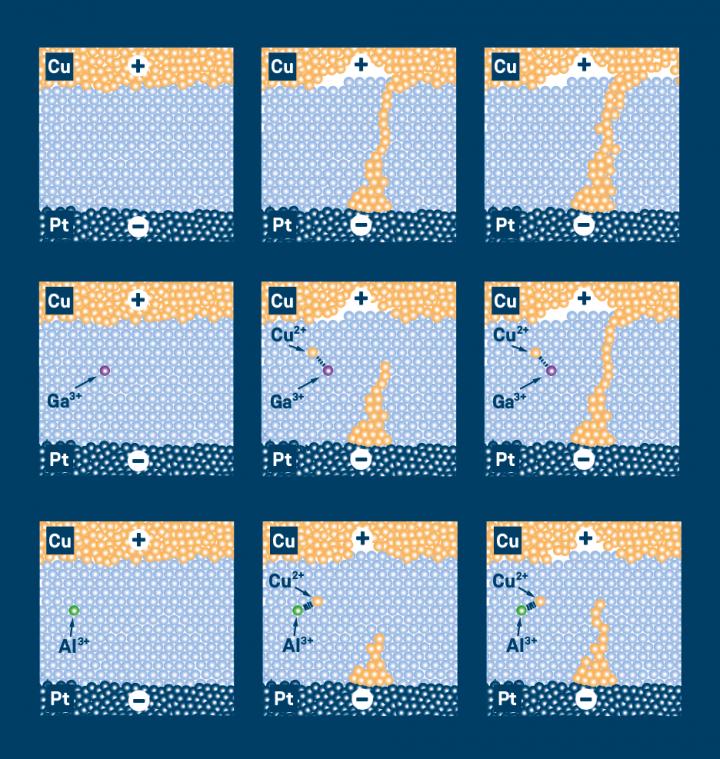

Memristors are usually made of an oxide-based material sandwiched between two electrodes. Researchers are getting better results when they combine two or more layers of different oxide-based materials between the electrodes. When an electrical current flows through the network, it induces ions to drift within the layers. The ions’ movements ultimately change the memristor’s resistance, which is necessary to send or stop a signal through the junction.

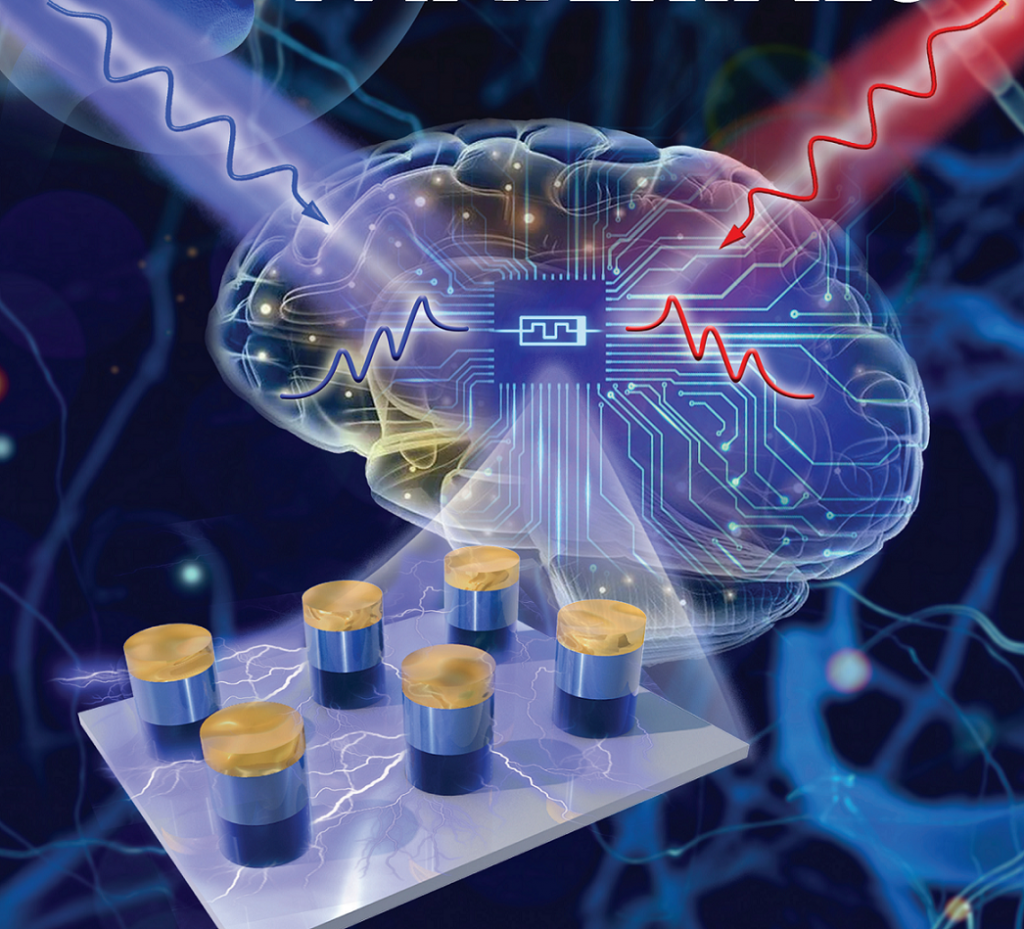

Memristors can be tuned further by changing the compounds used for electrodes or by adjusting the intermediate oxide-based materials. Zhuge and his team are currently developing optoelectronic neuromorphic computers based on optically-controlled oxide memristors. Compared to electronic memristors, photonic ones are expected to have higher operation speeds and lower energy consumption. They could be used to construct next generation artificial visual systems with high computing efficiency.
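As an aside of my own (not from the news release), the mechanism described in the excerpt above, where current makes ions drift and the drift changes the resistance, can be sketched with the simple linear ion-drift model often used in introductory treatments of memristors. This is only an illustrative sketch: the function name and every parameter value (`r_on`, `r_off`, `d`, `mu`) are assumptions of mine and are not taken from Zhuge's review or from any real device.

```python
def simulate_memristor(currents, dt, r_on=100.0, r_off=16000.0,
                       d=10e-9, mu=1e-14, w0=None):
    """Return the resistance (ohms) after each current sample.

    Linear ion-drift sketch with assumed, illustrative parameters:
      r_on, r_off : resistance when the layer is fully doped / undoped (ohms)
      d           : thickness of the oxide layer (m)
      mu          : ion mobility (m^2 / (V s))
      w0          : initial width of the doped region (m), default d / 2
    """
    w = 0.5 * d if w0 is None else w0
    resistances = []
    for i in currents:
        # State equation: dw/dt = mu * r_on / d * i(t)
        w += mu * r_on / d * i * dt
        # The doped region cannot leave the layer.
        w = min(max(w, 0.0), d)
        x = w / d
        # Resistance is a weighted mix of the two limiting values.
        resistances.append(r_on * x + r_off * (1.0 - x))
    return resistances


if __name__ == "__main__":
    # Ten 1 mA pulses of 1 ms each lower the resistance step by step.
    for r in simulate_memristor([1e-3] * 10, dt=1e-3):
        print(round(r, 1))
```

Reversing the sign of the current drives the resistance back up, which is the 'memory' in memristor: the device's present resistance depends on the history of the current that has passed through it.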

Now for a picture that accompanied the news release, which follows,

Here’s the February 7, 2021 Ningbo Institute of Materials Technology and Engineering (NIMTE) press release featuring this work and a more technical description,

A research group led by Prof. ZHUGE Fei at the Ningbo Institute of Materials Technology and Engineering (NIMTE) of the Chinese Academy of Sciences (CAS) developed an all-optically controlled (AOC) analog memristor, whose memconductance can be reversibly tuned by varying only the wavelength of the controlling light.

As the next generation of artificial intelligence (AI), neuromorphic computing (NC) emulates the neural structure and operation of the human brain at the physical level, and thus can efficiently perform multiple advanced computing tasks such as learning, recognition and cognition.

Memristors are promising candidates for NC thanks to the feasibility of high-density 3D integration and low energy consumption. Among them, the emerging optoelectronic memristors are competitive by virtue of combining the advantages of both photonics and electronics. However, the reversible tuning of memconductance depends highly on the electric excitation, which has severely limited the development and application of optoelectronic NC.

To address this issue, researchers at NIMTE proposed a bilayered oxide AOC memristor, based on the relatively mature semiconductor material InGaZnO and a memconductance tuning mechanism of light-induced electron trapping and detrapping.

The traditional electrical memristors require strong electrical stimuli to tune their memconductance, leading to high power consumption, a large amount of Joule heat, microstructural change triggered by the Joule heat, and even high crosstalk in memristor crossbars.

On the contrary, the developed AOC memristor does not involve microstructure changes, and can operate upon weak light irradiation with light power density of only 20 μW cm⁻², which has provided a new approach to overcome the instability of the memristor.

Specifically, the AOC memristor can serve as an excellent synaptic emulator and thus mimic spike-timing-dependent plasticity (STDP) which is an important learning rule in the brain, indicating its potential applications in AOC spiking neural networks for high-efficiency optoelectronic NC.

Moreover, compared to purely optical computing, the optoelectronic computing using our AOC memristor showed higher practical feasibility, on account of the simple structure and fabrication process of the device.

The study may shed light on the in-depth research and practical application of optoelectronic NC, and thus promote the development of the new generation of AI.

This work was supported by the National Natural Science Foundation of China (No. 61674156 and 61874125), the Strategic Priority Research Program of Chinese Academy of Sciences (No. XDB32050204), and the Zhejiang Provincial Natural Science Foundation of China (No. LD19E020001).
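For readers unfamiliar with spike-timing-dependent plasticity (STDP), mentioned in the press release, here is a minimal sketch of the textbook pair-based form of the rule: a synapse is strengthened when the 'pre' neuron fires shortly before the 'post' neuron and weakened when the order is reversed, with the effect fading as the gap between the two spikes grows. This is my own illustration, not the NIMTE group's device model; the function name, the amplitudes (`a_plus`, `a_minus`) and the time constants (`tau_plus`, `tau_minus`) are assumed values.

```python
import math


def stdp_delta_w(dt_ms, a_plus=0.01, a_minus=0.012,
                 tau_plus=20.0, tau_minus=20.0):
    """Weight change for one pre/post spike pair (assumed parameters).

    dt_ms = t_post - t_pre, in milliseconds.
    """
    if dt_ms > 0:
        # Pre fired before post: strengthen the synapse (potentiation).
        return a_plus * math.exp(-dt_ms / tau_plus)
    if dt_ms < 0:
        # Post fired before pre: weaken the synapse (depression).
        return -a_minus * math.exp(dt_ms / tau_minus)
    return 0.0


if __name__ == "__main__":
    for dt in (-40, -10, 10, 40):
        print(dt, stdp_delta_w(dt))
```

In a memristor acting as a synapse, the 'weight' would correspond to the device's conductance (the press release's 'memconductance').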

Here’s a link to and a citation for the paper,

Hybrid oxide brain-inspired neuromorphic devices for hardware implementation of artificial intelligence by Jingrui Wang, Xia Zhuge & Fei Zhuge. Science and Technology of Advanced Materials Volume 22, 2021 – Issue 1 Pages 326-344 DOI: https://doi.org/10.1080/14686996.2021.1911277 Published online: 14 May 2021

This paper appears to be open access.

Netherlands

In this case, a May 18, 2021 news item on Nanowerk marries oxides to spintronics,

Classic computers use binary values (0/1) to perform. By contrast, our brain cells can use more values to operate, making them more energy-efficient than computers. This is why scientists are interested in neuromorphic (brain-like) computing.

Physicists from the University of Groningen (the Netherlands) have used a complex oxide to create elements comparable to the neurons and synapses in the brain using spins, a magnetic property of electrons.

…

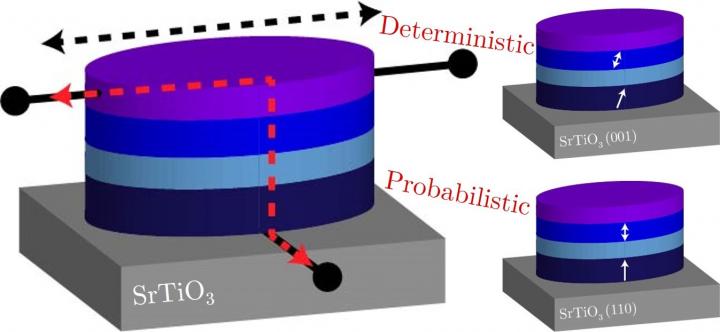

The press release, which follows, was accompanied by this image illustrating the work,

A May 18, 2021 University of Groningen press release (also on EurekAlert), which originated the news item, adds more ‘spin’ to the story,

Although computers can do straightforward calculations much faster than humans, our brains outperform silicon machines in tasks like object recognition. Furthermore, our brain uses less energy than computers. Part of this can be explained by the way our brain operates: whereas a computer uses a binary system (with values 0 or 1), brain cells can provide more analogue signals with a range of values.

Thin films

The operation of our brains can be simulated in computers, but the basic architecture still relies on a binary system. That is why scientists look for ways to expand this, creating hardware that is more brain-like, but will also interface with normal computers. ‘One idea is to create magnetic bits that can have intermediate states’, says Tamalika Banerjee, Professor of Spintronics of Functional Materials at the Zernike Institute for Advanced Materials, University of Groningen. She works on spintronics, which uses a magnetic property of electrons called ‘spin’ to transport, manipulate and store information.

In this study, her PhD student Anouk Goossens, first author of the paper, created thin films of a ferromagnetic metal (strontium-ruthenate oxide, SRO) grown on a substrate of strontium titanate oxide. The resulting thin film contained magnetic domains that were perpendicular to the plane of the film. ‘These can be switched more efficiently than in-plane magnetic domains’, explains Goossens. By adapting the growth conditions, it is possible to control the crystal orientation in the SRO. Previously, out-of-plane magnetic domains have been made using other techniques, but these typically require complex layer structures.

Magnetic anisotropy

The magnetic domains can be switched using a current through a platinum electrode on top of the SRO. Goossens: ‘When the magnetic domains are oriented perfectly perpendicular to the film, this switching is deterministic: the entire domain will switch.’ However, when the magnetic domains are slightly tilted, the response is probabilistic: not all the domains are the same, and intermediate values occur when only part of the crystals in the domain have switched.

By choosing variants of the substrate on which the SRO is grown, the scientists can control its magnetic anisotropy. This allows them to produce two different spintronic devices. ‘This magnetic anisotropy is exactly what we wanted’, says Goossens. ‘Probabilistic switching compares to how neurons function, while the deterministic switching is more like a synapse.’

The scientists expect that in the future, brain-like computer hardware can be created by combining these different domains in a spintronic device that can be connected to standard silicon-based circuits. Furthermore, probabilistic switching would also allow for stochastic computing, a promising technology which represents continuous values by streams of random bits. Banerjee: ‘We have found a way to control intermediate states, not just for memory but also for computing.’
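The press release's description of stochastic computing (continuous values represented by streams of random bits) may be easier to picture with a small software sketch. A value between 0 and 1 is encoded as the fraction of 1s in a random bit stream, and two such values can be multiplied with nothing more than a bitwise AND, provided the two streams are independent. This is my own illustration with hypothetical function names; it says nothing about how the Groningen devices would be wired up.

```python
import random


def encode(p, n, rng):
    """Encode a value p in [0, 1] as n random bits, each 1 with probability p."""
    return [1 if rng.random() < p else 0 for _ in range(n)]


def decode(bits):
    """Recover the encoded value as the fraction of 1s in the stream."""
    return sum(bits) / len(bits)


def multiply(a_bits, b_bits):
    """Multiply two encoded values with a bitwise AND.

    Valid only when the two streams are statistically independent.
    """
    return [a & b for a, b in zip(a_bits, b_bits)]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the run is repeatable
    a = encode(0.5, 20000, rng)
    b = encode(0.4, 20000, rng)
    # The decoded product should be close to 0.5 * 0.4 = 0.2.
    print(decode(multiply(a, b)))
```

The result is approximate and becomes more accurate as the streams get longer. A device that switches probabilistically could, in principle, play the role of the random bit source that `encode` plays here.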

Here’s a link to and a citation for the paper,

Anisotropy and Current Control of Magnetization in SrRuO₃/SrTiO₃ Heterostructures for Spin-Memristors by A.S. Goossens, M.A.T. Leiviskä and T. Banerjee. Frontiers in Nanotechnology DOI: https://doi.org/10.3389/fnano.2021.680468 Published: 18 May 2021

This appears to be open access.