Thank goodness for the March 15, 2018 notice from the Art/Sci Salon in Toronto (received via email) announcing an event on smart cities being held in the nearby city of Vaughan (it borders Toronto to the north). It sent me on quite the chase after a reference to Smart City projects taking place across the country; the results follow this bit about the event.

smARTcities SALON

From the announcement,

SMARTCITIES SALON

Smart City projects are currently underway across the country, including Google SideWalk at Toronto Harbourfront. Canada’s first Smart Hospital is currently under construction in the City of Vaughan. It’s an example of the city working towards building a reputation as one of the world’s leading Smart Cities, by adopting new technologies consistent with priorities defined by citizen collaboration.

Hon. Maurizio Bevilacqua, P.C., Mayor chairs the Smart City Advisory Task Force leading historic transformation in Vaughan. Working to become a Smart City is a chance to encourage civic engagement, accelerate economic growth, and generate efficiencies. His opening address will outline some of the priorities and opportunities that our panel will discuss.

PANELISTS

Lilian Radovac, PhD., Assistant Professor, Institute of Communication, Culture, Information & Technology, University of Toronto. Lilian is a historian of urban sounds and cultures and has a critical interest in SmartCity initiatives in two of the cities she has called home: New York City and Toronto.

Oren Berkovich is the CEO of Singularity University in Canada, an educational institution and a global network of experts and entrepreneurs that work together on solving the world’s biggest challenges. As a catalyst for long-term growth Oren spends his time connecting people with ideas to facilitate strategic conversations about the future.

Frank Di Palma, the Chief Information Officer for the City of Vaughan, is a graduate of York University with more than 20 years experience in IT operations and services. Frank leads the many SmartCity initiatives already underway at Vaughan City Hall.

Ron Wild, artist and Digital Art/Science Collaborator, will moderate the discussion.

Audience Participation opportunities will enable attendees to forward questions for consideration by the panel.

…

You can register for the smARTcities SALON here on Eventbrite,

Art Exhibition Reception

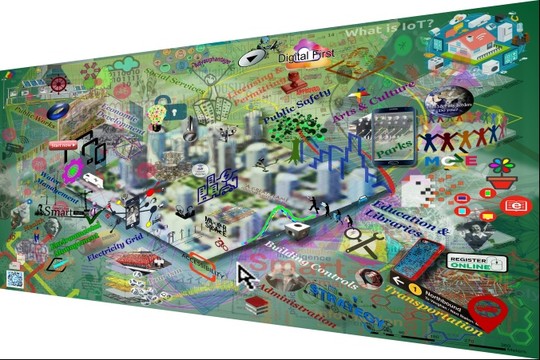

Following the panel discussion, the audience is invited to view the art exhibition ‘smARTcities; exploring the digital frontier.’ Works commissioned by Vaughan specifically for the exhibition, including the SmartCity Map and SmartHospital Map will be shown as well as other Art/Science-themed works. Many of these ‘maps’ were made by Ron in collaboration with mathematicians, scientists, and medical researchers, some of whom will be in attendance. Further examples of Ron’s art can be found HERE

Please click through to buy a FREE ticket so we know how many guests to expect. Thank you.

This event can be reached by taking the subway up the #1 west line to the new Vaughan Metropolitan Centre terminal station. Take the #20 bus to the Vaughan Mills transfer loop; transfer there to the #4/A which will take you to the stop right at City Hall. Free parking is available for those coming by car. Car-pooling and ride-sharing is encouraged. The facility is fully accessible.

Here’s one of Wild’s pieces,

144×96″ triptych, Vaughan, 2018 Artist: mrowade (Ron Wild?)

I’m pretty sure that mrowade is Ron Wild.

Smart Cities, the rest of the country, and Vancouver

Much to my surprise, I covered the ‘Smart Cities’ story in its early (but not earliest) days (and before it was Smart Cities) in two posts: January 30, 2015 and January 27, 2016 about the National Research Council of Canada (NRC) and its cities and technology public engagement exercises.

David Vogt, in a July 12, 2016 posting on the Urban Opus website, provides some catch-up information,

Canada’s National Research Council (NRC) has identified Cities of the Future as a game-changing technology and economic opportunity. Following a national dialogue, an Executive Summit was held in Toronto on March 31, 2016, resulting in an important summary report that will become the seed for Canadian R&D strategy in this sector.

The conclusion so far is that the opportunity for Canada is to muster leadership in the following three areas (in order):

- Better Infrastructure and Infrastructure Management

- Efficient Transportation; and

- Renewable Energy

…

The National Research Council (NRC) offers a more balanced view of the situation on its “NRC capabilities in smart infrastructure and cities of the future” webpage,

Key opportunities for Canada

North America is one of the most urbanised regions in the world (82 % living in urban areas in 2014).

With growing urbanisation, sustainable development challenges will be increasingly concentrated in cities, requiring technology solutions.

Smart cities are data-driven, relying on broadband and telecommunications, sensors, social media, data collection and integration, automation, analytics and visualization to provide real-time situational analysis.

Most infrastructure will be “smart” by 2030 and transportation systems will be intelligent, adaptive and connected.

Renewable energy, energy storage, power quality and load measurement will contribute to smart grid solutions that are integrated with transportation.

“Green”, sustainable and high-performing construction and infrastructure materials are in demand.

Canadian challenges

High energy use: Transportation accounts for roughly 23% of Canada’s total greenhouse gas emissions, followed closely by the energy consumption of buildings, which accounts for 12% of Canada’s greenhouse gas emissions (Canada’s United Nations Framework Convention on Climate Change report).

Traffic congestion in Canadian cities is increasing, contributing to loss of productivity, increased stress for citizens as well as air and noise pollution.

Canadian cities are susceptible to extreme weather and events related to climate change (e.g., floods, storms).

Changing demographics: aging population (need for accessible transportation options, housing, medical and recreational services) and diverse (immigrant) populations.

Financial and jurisdictional issues: the inability of municipalities (who have primary responsibility) to finance R&D or large-scale solutions without other government assistance.

…

Opportunities being examined

Living lab

Test bed for smart city technology in order to quantify and demonstrate the benefits of smart cities.

Multiple partnering opportunities (e.g. municipalities, other government organizations, industry associations, universities, social sciences, urban planning).

The integrated city

Efficient transportation: integration of personal mobility and freight movement as key city and inter-city infrastructure.

Efficient and integrated transportation systems linked to city infrastructure.

Planning urban environments for mobility while repurposing redundant infrastructures (converting parking to the food-water-energy nexus) as population shifts away from personal transportation.

FOOD-WATER-ENERGY NEXUS

Sustainable urban bio-cycling.

System approach to the development of the technology platforms required to address the nexus.

…

Key enabling platform technologies

Artificial intelligence

Computer vision and image understanding

Adaptive robots; future robotic platforms for part manufacturing

Understanding human emotions from language

Next generation information extraction using deep learning

Speech recognition

Artificial intelligence to optimize talent management for human resources

Nanomaterials

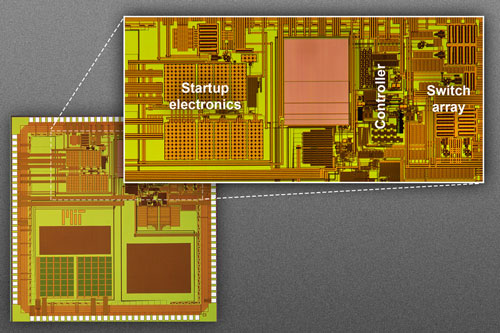

Nanoelectronics

Nanosensing

Smart materials

Nanocomposites

Self-assembled nanostructures

Nanoimprint

Nanoplasmonic

Nanoclay

Nanocoating

Big data analytics

Predictive equipment maintenance

Energy management

Artificial intelligence for optimizing energy storage and distribution

Understanding and tracking of hazardous chemical elements

Process and design optimization

Printed electronics for Internet of Things

Inks and materials

Printing technologies

Large area, flexible, stretchable, printed electronics components

Applications: sensors for Internet of Things, wearables, antenna, radio-frequency identification tags, smart surfaces, packaging, security, signage…

If you’re curious about the government’s plan with regard to implementation, this NRC webpage provides some fascinating insight into their hopes if not the reality. (I have mentioned artificial intelligence and the federal government before in a March 16, 2018 posting about the federal budget and science; scroll down approximately 50% of the way to the subsection titled, Budget 2018: Who’s watching over us? and scan for Michael Karlin’s name.)

As for the current situation, there’s a Smart Cities Challenge taking place. Both Toronto and Vancouver have webpages dedicated to their response to the challenge. (You may want to check your own city’s website to find out if it’s participating.) I have a preference for the Toronto page as they immediately state that they’re participating in this challenge and they provide an explanation for what they want from you. Vancouver’s page is, by comparison, a bit confusing, with two videos being immediately presented to the reader and from there too many graphics competing for your attention. They do, however, offer something valuable: links to explanations for smart cities and for the challenge.

Here’s a description of the Smart Cities Challenge (from its webpage),

The Smart Cities Challenge

The Smart Cities Challenge is a pan-Canadian competition open to communities of all sizes, including municipalities, regional governments and Indigenous communities (First Nations, Métis and Inuit). The Challenge encourages communities to adopt a smart cities approach to improve the lives of their residents through innovation, data and connected technology.

- One prize of up to $50 million open to all communities, regardless of population;

- Two prizes of up to $10 million open to all communities with populations under 500,000 people; and

- One prize of up to $5 million open to all communities with populations under 30,000 people.

Infrastructure Canada is engaging Indigenous leaders, communities and organizations to finalize the design of a competition specific to Indigenous communities that will reflect their unique realities and issues. Indigenous communities are also eligible to compete for all the prizes in the current competition.

The Challenge will be an open and transparent process. Communities that submit proposals will also post them online, so that residents and stakeholders can see them. An independent Jury will be appointed to select finalists and winners.

Applications are due by April 24, 2018. Communities interested in participating should visit the Impact Canada Challenge Platform for the applicant guide and more information.

Finalists will be announced in the Summer of 2018 and winners in Spring 2019 according to the information on the Impact Canada Challenge Platform.

It’s not clear to me if Jessie Adcock (City of Vancouver Chief Digital Officer) is leading Vancouver’s effort to win the Smart Cities Challenge, but her Twitter feed certainly features information on the topic. I suspect that, if you’re looking for the most up-to-date information on Vancouver’s participation, you’re more likely to find it on her feed than on the City of Vancouver’s Smart Cities Challenge webpage.